Deep Learning Basics: Softmax Regression (a Single-Layer Neural Network)

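I have recently been reading Dive-into-DL-PyTorch (Dive into Deep Learning, PyTorch edition), available at https://github.com/newmonkey/Dive-into-DL-PyTorch. I have learned a great deal from it, so I am recording my progress here.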

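This post covers softmax regression. Like linear regression, softmax regression is a single-layer neural network; linear regression is mainly used for regression problems, whereas softmax regression is mainly used for classification. Instead of a single continuous output, softmax regression produces one score per class and turns these scores into a probability distribution over the classes. In this post I apply it to handwritten-digit recognition. The softmax function is also called the normalized exponential function.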
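As a minimal illustration of the normalized exponential function, the NumPy sketch below computes `softmax(o)_j = exp(o_j) / sum_k exp(o_k)` for each row of a score matrix. Subtracting each row's maximum before exponentiating does not change the result but prevents overflow for large scores. The function name `softmax` is our own, not a library API.

```python
import numpy as np

def softmax(logits):
    """Row-wise softmax for a 2-D array of shape (batch, num_classes)."""
    # Subtracting the row max leaves the result unchanged but keeps exp() from overflowing
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

o = np.array([[1.0, 2.0, 3.0],
              [1000.0, 1000.0, 1000.0]])  # the second row would overflow without the shift
p = softmax(o)
print(p.sum(axis=1))  # each row sums to 1
print(p[1])           # equal scores give a uniform distribution
```

Because softmax only depends on differences between scores, adding the same constant to every score in a row leaves the probabilities unchanged, which is exactly why the max-subtraction trick is safe.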

1 Collect the dataset

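We use `torchvision.datasets` from torchvision to download the handwritten-digit dataset MNIST, which provides 60,000 training samples and 10,000 test samples.

```python
import torch
import torchvision
import numpy as np

# The name comes from the book's Fashion-MNIST chapter; this function actually loads MNIST.
# root is a placeholder: replace it with a local directory for the downloaded files.
def load_data_fashion_mnist(batch_size, root='path/to/local/dir'):
    # ToTensor converts each image to a float tensor of shape [1, 28, 28] with values in [0, 1]
    transform = torchvision.transforms.ToTensor()
    mnist_train = torchvision.datasets.MNIST(root=root, train=True, download=True, transform=transform)
    mnist_test = torchvision.datasets.MNIST(root=root, train=False, download=True, transform=transform)
    # Shuffle the training data every epoch; keep the test order fixed
    train_iter = torch.utils.data.DataLoader(mnist_train, batch_size=batch_size, shuffle=True)
    test_iter = torch.utils.data.DataLoader(mnist_test, batch_size=batch_size, shuffle=False)
    return train_iter, test_iter

batch_size = 256
train_iter, test_iter = load_data_fashion_mnist(batch_size)
```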
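To see what `batch_size=256` means in practice: `DataLoader` keeps the final, smaller batch by default (`drop_last=False`), so one pass over N samples yields ceil(N / 256) batches. The helper `batches_per_epoch` below is hypothetical and only does this arithmetic; it does not use PyTorch.

```python
import math

def batches_per_epoch(num_samples, batch_size):
    """Return (number of batches, size of the last batch) when the last partial batch is kept."""
    n_batches = math.ceil(num_samples / batch_size)
    last = num_samples - (n_batches - 1) * batch_size
    return n_batches, last

print(batches_per_epoch(60000, 256))  # MNIST training set: (235, 96)
print(batches_per_epoch(10000, 256))  # MNIST test set: (40, 16)
```

So each training epoch performs 235 parameter updates, the last one on only 96 images.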

2 Define and initialize the model

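Each sample has shape [1, 28, 28]: a single-channel image 28 pixels high and 28 pixels wide. The model therefore flattens it into an input vector of length 28 × 28 = 784, and it has 10 outputs, one per digit class. The output layer of softmax regression is a fully connected layer, so a single linear module is all we need.

```python
import torch.nn as nn
from torch.nn import init

num_inputs = 784   # 28 * 28 pixels
num_outputs = 10   # digits 0-9

class LinearNet(nn.Module):
    def __init__(self, num_inputs, num_outputs):
        super(LinearNet, self).__init__()
        self.linear = nn.Linear(num_inputs, num_outputs)

    def forward(self, x):  # x shape: (batch, 1, 28, 28)
        # Flatten each image to a 784-dim vector and return raw scores (logits); no softmax here
        y = self.linear(x.view(x.shape[0], -1))
        return y

net = LinearNet(num_inputs, num_outputs)
print(net)

# Initialize the weights from N(0, 0.01^2) and the biases to zero
init.normal_(net.linear.weight, mean=0, std=0.01)
init.constant_(net.linear.bias, val=0)
```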
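The forward pass of `LinearNet` can be mirrored in plain NumPy to make the shapes explicit. A batch of shape (B, 1, 28, 28) is flattened to (B, 784), multiplied by the transposed weight matrix of shape (10, 784) (`nn.Linear` stores `weight` as (out_features, in_features) and computes `x @ weight.T + bias`), and shifted by a bias of shape (10,). This is a sketch on random inputs, not a replacement for the PyTorch module.

```python
import numpy as np

rng = np.random.default_rng(0)
num_inputs, num_outputs, batch = 784, 10, 4

# Same initialization as above: weights ~ N(0, 0.01^2), bias = 0
W = rng.normal(0.0, 0.01, size=(num_outputs, num_inputs))  # layout (out, in), as in nn.Linear
b = np.zeros(num_outputs)

X = rng.random((batch, 1, 28, 28))   # stand-in for a batch of images with values in [0, 1)
flat = X.reshape(X.shape[0], -1)     # equivalent of x.view(x.shape[0], -1)
logits = flat @ W.T + b              # what self.linear computes

print(flat.shape, logits.shape)      # (4, 784) (4, 10)
print(W.size + b.size)               # 7850 trainable parameters
```

The whole model has only 784 × 10 + 10 = 7850 parameters, which is why softmax regression trains quickly even on a CPU.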

3 Softmax and the cross-entropy loss function

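PyTorch provides a single function that combines the softmax operation with the cross-entropy loss computation. `nn.CrossEntropyLoss` expects raw scores (logits), which is why `LinearNet.forward` does not apply softmax itself; combining the two steps is also more numerically stable than computing them separately.

```python
loss = nn.CrossEntropyLoss()
```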
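What `nn.CrossEntropyLoss` computes can be sketched in NumPy: it applies log-softmax to the logits and averages the negative log-probability of the true class over the batch (its default is `reduction='mean'`). Writing log-softmax as `o_j - logsumexp(o)`, instead of taking the log of a separately computed softmax, is what keeps it numerically stable. The function name below is our own.

```python
import numpy as np

def cross_entropy_from_logits(logits, labels):
    """Mean cross-entropy over the batch, computed directly from logits."""
    # log_softmax(o)_j = o_j - logsumexp(o), computed stably by subtracting the row max
    m = logits.max(axis=1, keepdims=True)
    log_probs = logits - m - np.log(np.exp(logits - m).sum(axis=1, keepdims=True))
    # Pick the log-probability of the correct class for each sample
    picked = log_probs[np.arange(len(labels)), labels]
    return -picked.mean()

logits = np.array([[0.0, 0.0],      # uninformative scores: this sample's loss is log 2
                   [10.0, -10.0]])  # confident and correct for class 0: loss close to 0
labels = np.array([0, 0])
print(cross_entropy_from_logits(logits, labels))  # about half of log 2
```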

4 Define the optimization algorithm

We use minibatch stochastic gradient descent (SGD) with a learning rate of 0.005 as the optimization algorithm.

```python
optimizer = torch.optim.SGD(net.parameters(), lr=0.005)
```
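To make one call of `optimizer.step()` concrete, here is a NumPy sketch of a single minibatch SGD update for softmax regression. For the mean cross-entropy loss, the gradient with respect to the logits is `(softmax(O) - Y) / B`, where Y is the one-hot label matrix and B the batch size; each parameter then moves by `-lr * grad`. The toy data and the helper names are illustrative assumptions; only lr=0.005 comes from the text.

```python
import numpy as np

def softmax(o):
    e = np.exp(o - o.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

def loss_and_grads(W, b, X, y):
    """Mean cross-entropy and its gradients for logits = X @ W.T + b."""
    B = X.shape[0]
    P = softmax(X @ W.T + b)
    loss = -np.log(P[np.arange(B), y]).mean()
    Y = np.eye(W.shape[0])[y]                # one-hot labels, shape (B, num_classes)
    dO = (P - Y) / B                         # gradient w.r.t. the logits
    return loss, dO.T @ X, dO.sum(axis=0)    # dW has W's shape (out, in); db has b's shape

# Toy problem: two 2-D inputs, two classes, parameters start at zero
X = np.array([[1.0, 0.0], [0.0, 1.0]])
y = np.array([0, 1])
W, b = np.zeros((2, 2)), np.zeros(2)
lr = 0.005

loss_before, dW, db = loss_and_grads(W, b, X, y)
W -= lr * dW                                 # SGD update: param <- param - lr * grad
b -= lr * db
loss_after, _, _ = loss_and_grads(W, b, X, y)
print(loss_before, loss_after)               # the loss decreases slightly
```

With a learning rate this small, each step changes the loss only a little, which is consistent with the gradual improvement over epochs in the training log.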

5 Train the model

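We set the number of epochs to 10 and train the model.

```python
num_epochs = 10

def evaluate_accuracy(data_iter, net):
    """Fraction of correctly classified samples in data_iter."""
    acc_sum, n = 0.0, 0
    with torch.no_grad():  # no gradients are needed for evaluation
        for X, y in data_iter:
            acc_sum += (net(X).argmax(dim=1) == y).float().sum().item()
            n += y.shape[0]
    return acc_sum / n

def sgd(params, lr, batch_size):
    # Manual minibatch SGD, only used when no optimizer is passed to train_ch3
    for param in params:
        param.data -= lr * param.grad / batch_size

def train_ch3(net, train_iter, test_iter, loss, num_epochs, batch_size,
              params=None, lr=None, optimizer=None):
    for epoch in range(num_epochs):
        train_l_sum, train_acc_sum, n = 0.0, 0.0, 0
        for X, y in train_iter:
            y_hat = net(X)
            # nn.CrossEntropyLoss defaults to reduction='mean', so l is already the batch mean
            # and .sum() leaves it unchanged; the printed loss (train_l_sum / n) is therefore
            # roughly the mean per-sample loss divided by the batch size
            l = loss(y_hat, y).sum()

            # Zero the gradients
            if optimizer is not None:
                optimizer.zero_grad()
            elif params is not None and params[0].grad is not None:
                for param in params:
                    param.grad.data.zero_()

            l.backward()
            if optimizer is None:
                sgd(params, lr, batch_size)
            else:
                optimizer.step()  # used in the "concise implementation of softmax regression" section

            train_l_sum += l.item()
            train_acc_sum += (y_hat.argmax(dim=1) == y).sum().item()
            n += y.shape[0]
        test_acc = evaluate_accuracy(test_iter, net)
        print('epoch %d, loss %.4f, train acc %.3f, test acc %.3f'
              % (epoch + 1, train_l_sum / n, train_acc_sum / n, test_acc))

train_ch3(net, train_iter, test_iter, loss, num_epochs, batch_size, None, None, optimizer)
```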

Results:

```text
epoch 1, loss 0.0071, train acc 0.675, test acc 0.788
epoch 2, loss 0.0050, train acc 0.795, test acc 0.821
epoch 3, loss 0.0040, train acc 0.819, test acc 0.837
epoch 4, loss 0.0034, train acc 0.832, test acc 0.846
epoch 5, loss 0.0031, train acc 0.841, test acc 0.855
epoch 6, loss 0.0028, train acc 0.848, test acc 0.860
epoch 7, loss 0.0026, train acc 0.853, test acc 0.865
epoch 8, loss 0.0025, train acc 0.857, test acc 0.868
epoch 9, loss 0.0024, train acc 0.860, test acc 0.871
epoch 10, loss 0.0023, train acc 0.863, test acc 0.874
```
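Both `evaluate_accuracy` and the `train_acc_sum` line count a prediction as correct when the index of the largest output score equals the label. Because softmax is monotonic, taking the argmax of the raw logits gives the same prediction as taking it after softmax, so no softmax is needed at prediction time. A NumPy sketch of the computation (the function name `accuracy` is ours):

```python
import numpy as np

def accuracy(logits, labels):
    """Fraction of samples whose highest-scoring class equals the label."""
    preds = logits.argmax(axis=1)    # predicted class per sample
    return float((preds == labels).mean())

logits = np.array([[0.1, 2.0, -1.0],   # predicts class 1
                   [3.0, 0.5,  0.2],   # predicts class 0
                   [0.0, 0.1,  0.3]])  # predicts class 2
labels = np.array([1, 0, 1])
print(accuracy(logits, labels))        # 2 of 3 predictions are correct
```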

6 Prediction

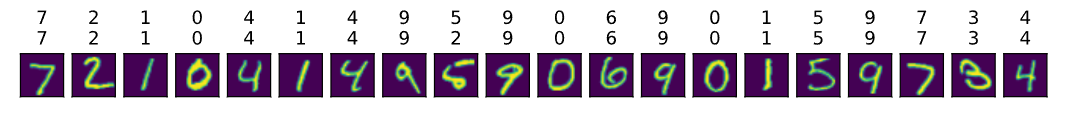

With training complete, we can now demonstrate how to classify images. The first row shows the true labels, the second row the predicted labels, and the third row the images.

```python
import torch
import matplotlib.pyplot as plt

# Take the first batch from the test set
X, y = next(iter(test_iter))

def get_fashion_mnist_labels(labels):
    # MNIST classes are simply the digits 0-9
    text_labels = ['0', '1', '2', '3', '4', '5', '6', '7', '8', '9']
    return [text_labels[int(i)] for i in labels]

def show_fashion_mnist(images, labels):
    # One row of subplots; _ is a variable we ignore (the Figure object)
    _, figs = plt.subplots(1, len(images), figsize=(12, 12))
    for f, img, lbl in zip(figs, images, labels):
        f.imshow(img.view((28, 28)).numpy())
        f.set_title(lbl)
        f.axes.get_xaxis().set_visible(False)
        f.axes.get_yaxis().set_visible(False)
    plt.show()

true_labels = get_fashion_mnist_labels(y.numpy())
with torch.no_grad():
    pred_labels = get_fashion_mnist_labels(net(X).argmax(dim=1).numpy())
titles = [true + '\n' + pred for true, pred in zip(true_labels, pred_labels)]

show_fashion_mnist(X[0:20], titles[0:20])
```

Prediction results (the first row shows the true labels, the second row the predicted labels, and the third row the images).
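The label-mapping and title-building steps of the prediction code do not depend on PyTorch, so they can be checked on their own. In this sketch, `to_text_labels` is a hypothetical stand-in for `get_fashion_mnist_labels`, and the index lists are made-up examples rather than real model output. Listing the mismatched positions is a quick way to find the misclassified images in a plotted batch.

```python
def to_text_labels(labels):
    """Map class indices 0-9 to the digit strings '0'-'9'."""
    text_labels = [str(d) for d in range(10)]
    return [text_labels[int(i)] for i in labels]

true_labels = to_text_labels([7, 2, 1])
pred_labels = to_text_labels([7, 2, 7])   # made-up predictions with one error
titles = [t + '\n' + p for t, p in zip(true_labels, pred_labels)]
print(titles)
mistakes = [i for i, (t, p) in enumerate(zip(true_labels, pred_labels)) if t != p]
print(mistakes)  # [2]
```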

END!