YOLOV8 Improvement: How to Add an Attention Module (Using CBAM as an Example)

Contents: Preface / YOLOV8 / nn folder / modules.py / tasks.py / models folder / Summary

Preface

My graduation project uses YOLO, and since V8 had just been released, I decided to add an attention mechanism to v8.

YOLOV8

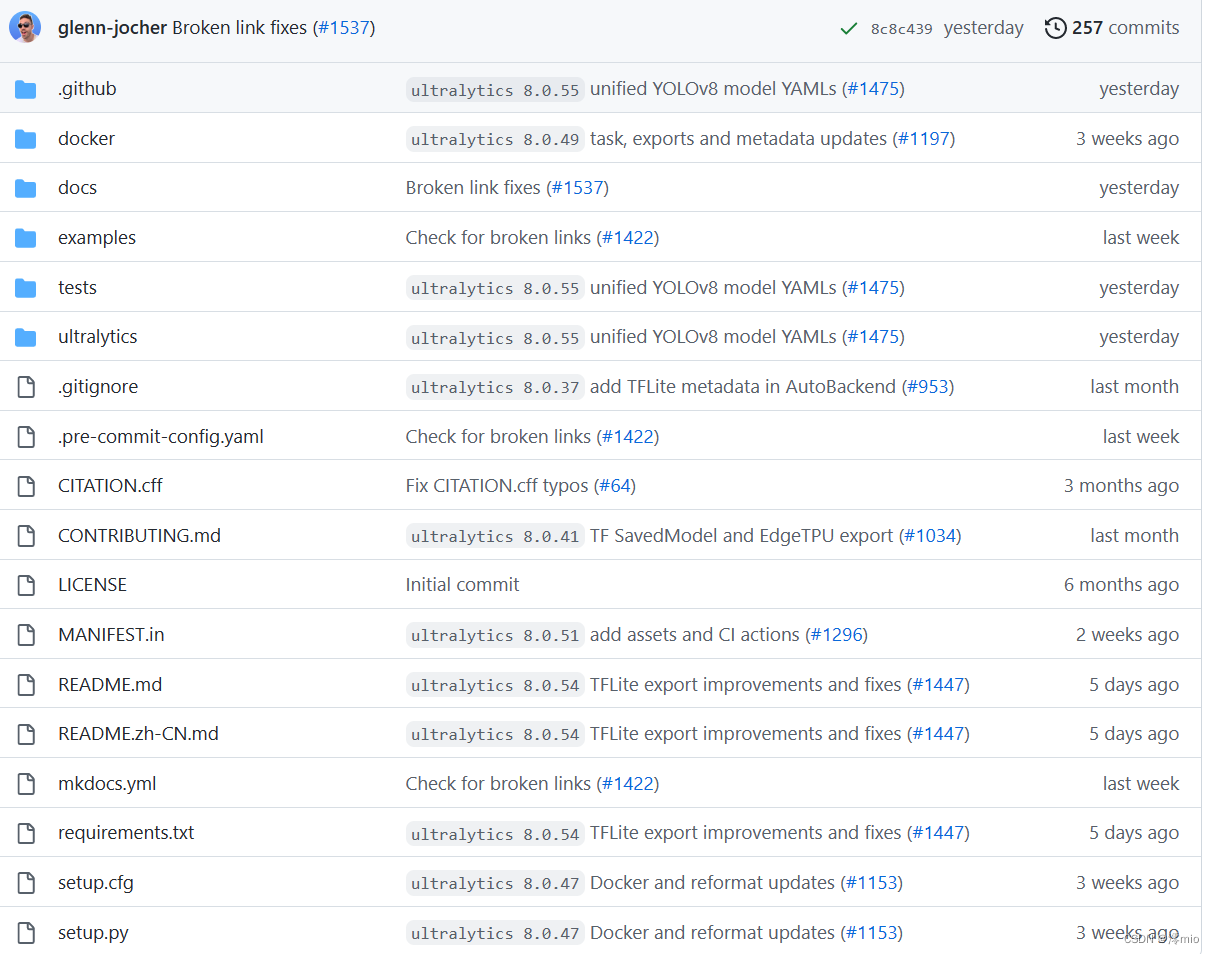

Code: official YOLOv8 code

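Install it with pip or clone it locally; I won't go into the details here. The rest of this post uses the pip-installed ultralytics package as the example.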

Go into the ultralytics folder.
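If you installed with pip and are not sure where the ultralytics folder lives, the sketch below locates an installed package using only the standard library (`package_dir` is a hypothetical helper, not part of ultralytics). From the command line, the Location line printed by `pip show ultralytics` points at the site-packages directory that contains the folder.

```python
import importlib.util
from pathlib import Path
from typing import Optional


def package_dir(name: str) -> Optional[Path]:
    """Return the directory of an installed top-level package, or None if it cannot be found."""
    spec = importlib.util.find_spec(name)
    if spec is None or spec.origin is None:  # not installed, or a namespace package
        return None
    return Path(spec.origin).parent  # origin is the package's __init__.py


if __name__ == "__main__":
    root = package_dir("ultralytics")
    if root is None:
        print("ultralytics is not installed in this environment")
    else:
        for sub in ("nn", "models"):  # the two folders edited in this post
            print(root / sub)
```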

nn folder

Then go into the nn folder.

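- modules.py: holds the commonly used building blocks, such as Conv, DWConv, ConvTranspose, TransformerLayer, Bottleneck, etc.
- tasks.py: imports the basic modules from modules.py and assembles them into models, building a different model for each downstream task.

modules.py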

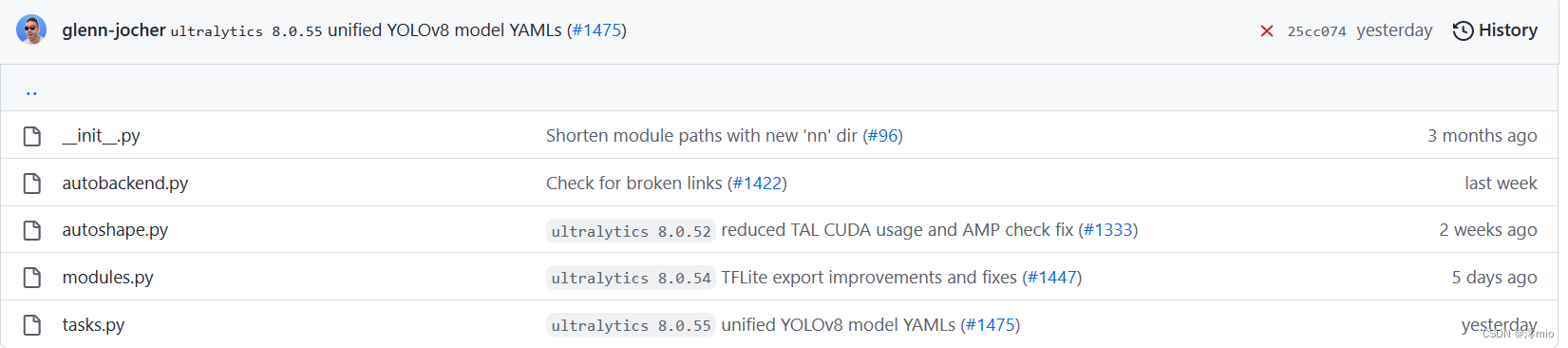

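In this file we can write our own attention module, or use the CBAM module that V8 already provides (see the CBAM class in the code):

```python
import torch
import torch.nn as nn  # both are already imported at the top of modules.py


class ChannelAttention(nn.Module):
    """Channel attention: keeps the channel dimension and compresses the spatial dimensions.

    This module focuses on *what* information in the input image is meaningful.
    1) Assume the input x has shape (b, c, w, h).
    2) Adaptive average pooling reduces it to (b, c, 1, 1).
    3) After a 1x1 2D convolution and a sigmoid, the shape is still (b, c, 1, 1).
    4) Multiplying this result with the input gives an output of shape (b, c, w, h).
    """

    # Channel-attention module https://github.com/open-mmlab/mmdetection/tree/v3.0.0rc1/configs/rtmdet
    def __init__(self, channels: int) -> None:
        super().__init__()
        self.pool = nn.AdaptiveAvgPool2d(1)
        self.fc = nn.Conv2d(channels, channels, 1, 1, 0, bias=True)
        self.act = nn.Sigmoid()

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return x * self.act(self.fc(self.pool(x)))


class SpatialAttention(nn.Module):
    """Spatial attention: keeps the spatial dimensions and compresses the channel dimension.

    This module focuses on *where* the target is located.
    1) Assume the input x has shape (b, c, w, h); it is processed along two paths.
    2) One path takes the mean over the channel dimension, giving (b, 1, w, h);
       the other takes the max over the channel dimension, also giving (b, 1, w, h).
    3) The two outputs are concatenated, giving (b, 2, w, h).
    4) A 2D convolution reduces this to a single channel, giving (b, 1, w, h).
    5) After a sigmoid, the result is multiplied with the input x; the final output is (b, c, w, h).
    """

    # Spatial-attention module
    def __init__(self, kernel_size=7):
        super().__init__()
        assert kernel_size in (3, 7), 'kernel size must be 3 or 7'
        padding = 3 if kernel_size == 7 else 1
        self.cv1 = nn.Conv2d(2, 1, kernel_size, padding=padding, bias=False)
        self.act = nn.Sigmoid()

    def forward(self, x):
        return x * self.act(self.cv1(torch.cat([torch.mean(x, 1, keepdim=True), torch.max(x, 1, keepdim=True)[0]], 1)))


class CBAM(nn.Module):
    # Convolutional Block Attention Module
    def __init__(self, c1, kernel_size=7):  # ch_in, kernels
        super().__init__()
        self.channel_attention = ChannelAttention(c1)
        self.spatial_attention = SpatialAttention(kernel_size)

    def forward(self, x):
        # channel attention first, then spatial attention; the output shape equals the input shape
        return self.spatial_attention(self.channel_attention(x))
```

If you use V8's built-in CBAM module, modules.py needs no changes. If you use your own attention module, simply append its code to the end of this file.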

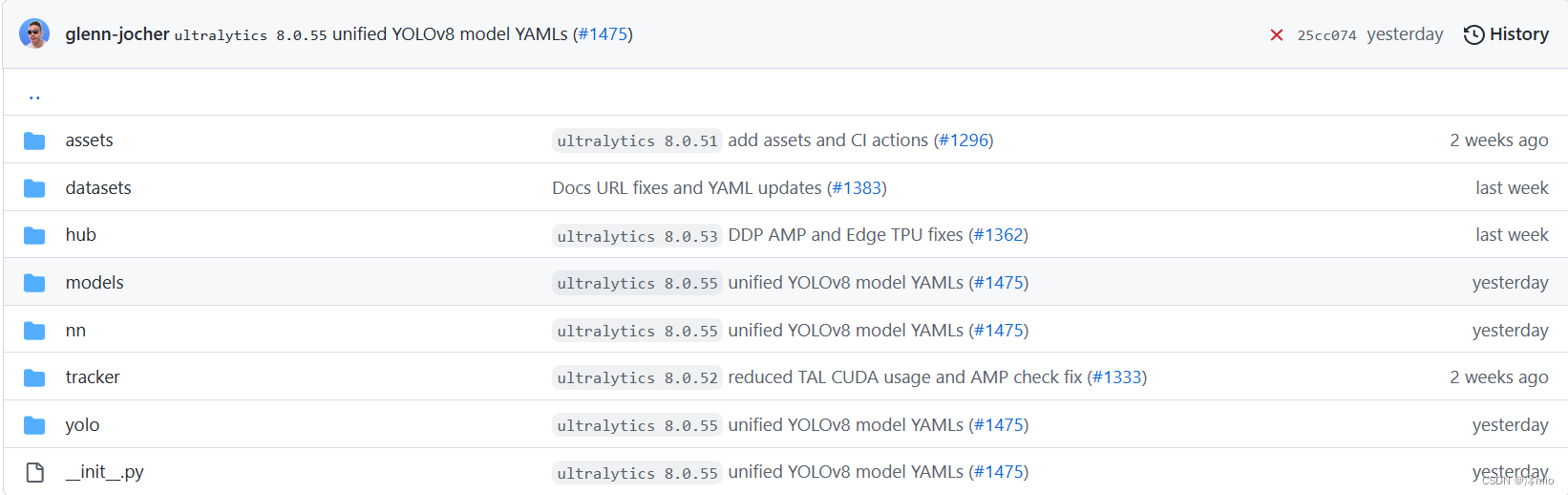
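As a sanity check on the CBAM code above, the arithmetic below counts CBAM's learnable parameters and confirms that SpatialAttention's padding keeps the spatial size, which is what lets the attention map be multiplied back onto x. It only re-derives numbers from the layer definitions (a 1x1 conv with bias in ChannelAttention, a bias-free k×k conv in SpatialAttention); the helper names are hypothetical, and the totals have not been compared against an actual training log.

```python
def conv2d_params(c_in: int, c_out: int, k: int, bias: bool) -> int:
    """Parameter count of nn.Conv2d(c_in, c_out, k, bias=bias) with groups=1."""
    return c_out * c_in * k * k + (c_out if bias else 0)


def conv_out_size(size: int, k: int, padding: int, stride: int = 1) -> int:
    """Output height/width of a 2D convolution."""
    return (size + 2 * padding - k) // stride + 1


def cbam_params(c1: int, kernel_size: int = 7) -> int:
    """Learnable parameters of one CBAM(c1, kernel_size) as defined in modules.py."""
    if kernel_size not in (3, 7):
        raise ValueError("kernel size must be 3 or 7")  # same restriction as SpatialAttention
    channel = conv2d_params(c1, c1, 1, bias=True)  # ChannelAttention.fc (pool and sigmoid have none)
    spatial = conv2d_params(2, 1, kernel_size, bias=False)  # SpatialAttention.cv1
    return channel + spatial


if __name__ == "__main__":
    print(cbam_params(160))      # 25858 = 160*160 + 160 + 2*7*7
    print(3 * cbam_params(640))  # 1231014: three stacked CBAM(640, 7), i.e. repeats = 3 in the yaml
    for k in (3, 7):
        pad = 3 if k == 7 else 1  # the padding SpatialAttention chooses
        print(k, conv_out_size(20, k, pad))  # 20 both times: the attention map keeps the input size
```

If the params column printed by parse_model for a CBAM layer differs from such totals, the channel count actually passed to CBAM is probably not the one you intended.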

tasks.py

This file builds the model by importing the modules defined in modules.py.

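The required modules are imported at the top of the file; note that many of the modules in modules.py are not actually used by v8. We add CBAM at the end of the import list.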

```python
from ultralytics.nn.modules import (C1, C2, C3, C3TR, SPP, SPPF, Bottleneck, BottleneckCSP, C2f, C3Ghost, C3x, Classify,
                                    Concat, Conv, ConvTranspose, Detect, DWConv, DWConvTranspose2d, Ensemble, Focus,
                                    GhostBottleneck, GhostConv, Segment, CBAM)  # CBAM added at the end
```

Next, modify the parse_model function (around line 428 of tasks.py in the version used here).

Add an `elif m is CBAM:` branch. The full code is as follows:

```python
def parse_model(d, ch, verbose=True):  # model_dict, input_channels(3)
    # Parse a YOLO model.yaml dictionary
    if verbose:
        LOGGER.info(f"\n{'':>3}{'from':>20}{'n':>3}{'params':>10} {'module':<45}{'arguments':<30}")
    nc, gd, gw, act = d['nc'], d['depth_multiple'], d['width_multiple'], d.get('activation')
    if act:
        Conv.default_act = eval(act)  # redefine default activation, i.e. Conv.default_act = nn.SiLU()
        if verbose:
            LOGGER.info(f"{colorstr('activation:')} {act}")  # print
    ch = [ch]
    layers, save, c2 = [], [], ch[-1]  # layers, savelist, ch out
    for i, (f, n, m, args) in enumerate(d['backbone'] + d['head']):  # from, number, module, args
        m = eval(m) if isinstance(m, str) else m  # eval strings
        for j, a in enumerate(args):
            # TODO: re-implement with eval() removal if possible
            # args[j] = (locals()[a] if a in locals() else ast.literal_eval(a)) if isinstance(a, str) else a
            with contextlib.suppress(NameError):
                args[j] = eval(a) if isinstance(a, str) else a  # eval strings

        n = n_ = max(round(n * gd), 1) if n > 1 else n  # depth gain
        if m in (Classify, Conv, ConvTranspose, GhostConv, Bottleneck, GhostBottleneck, SPP, SPPF, DWConv, Focus,
                 BottleneckCSP, C1, C2, C2f, C3, C3TR, C3Ghost, nn.ConvTranspose2d, DWConvTranspose2d, C3x):
            c1, c2 = ch[f], args[0]
            if c2 != nc:  # if c2 not equal to number of classes (i.e. for Classify() output)
                c2 = make_divisible(c2 * gw, 8)
            args = [c1, c2, *args[1:]]
            if m in (BottleneckCSP, C1, C2, C2f, C3, C3TR, C3Ghost, C3x):
                args.insert(2, n)  # number of repeats
                n = 1
        elif m is nn.BatchNorm2d:
            args = [ch[f]]
        elif m is Concat:
            c2 = sum(ch[x] for x in f)
        elif m in (Detect, Segment):
            args.append([ch[x] for x in f])
            if m is Segment:
                args[2] = make_divisible(args[2] * gw, 8)
        elif m is CBAM:
            # ch[f]:   output channels of the 'from' (previous) layer
            # args[0]: the first argument written in the yaml
            # c1:      input channel count
            # c2:      output channel count
            c1, c2 = ch[f], args[0]
            # print("ch[f]:", ch[f], "args[0]:", args[0], "args:", args, "c1:", c1, "c2:", c2)
            if c2 != nc:  # if c2 not equal to number of classes (i.e. for Classify() output)
                c2 = make_divisible(c2 * gw, 8)
            # CBAM(c1, kernel_size): args[0] is replaced by the real input channels.
            # CBAM keeps the channel count, so the scaled c2 should equal c1.
            args = [c1, *args[1:]]
        else:
            c2 = ch[f]

        m_ = nn.Sequential(*(m(*args) for _ in range(n))) if n > 1 else m(*args)  # module
        t = str(m)[8:-2].replace('__main__.', '')  # module type
        m.np = sum(x.numel() for x in m_.parameters())  # number params
        m_.i, m_.f, m_.type = i, f, t  # attach index, 'from' index, type
        if verbose:
            LOGGER.info(f'{i:>3}{str(f):>20}{n_:>3}{m.np:10.0f} {t:<45}{str(args):<30}')  # print
        save.extend(x % i for x in ([f] if isinstance(f, int) else f) if x != -1)  # append to savelist
        layers.append(m_)
        if i == 0:
            ch = []
        ch.append(c2)
    return nn.Sequential(*layers), sorted(save)
```

Note that CBAM's input channel count comes from the previous layer's output, so make sure CBAM's constructor arguments line up with what is actually passed in. You can uncomment the print line to compare the values yourself.
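To make the argument matching concrete, the pure-Python sketch below replays only the CBAM branch of parse_model for the first CBAM in a yolov8x-sized model (width_multiple 1.25). `cbam_branch` is a hypothetical name, and `make_divisible` reproduces the round-up formula ultralytics uses for an integer divisor. Because CBAM never changes the channel count, the c2 recorded in ch must equal c1; a mismatched args[0] makes the following layers expect the wrong number of input channels.

```python
import math
from typing import List, Tuple


def make_divisible(x: float, divisor: int = 8) -> int:
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def cbam_branch(ch: List[int], f: int, args: list, gw: float, nc: int) -> Tuple[list, int]:
    """Replay `elif m is CBAM:`: return (args passed to CBAM(...), c2 appended to ch)."""
    c1, c2 = ch[f], args[0]
    if c2 != nc:
        c2 = make_divisible(c2 * gw, 8)
    return [c1, *args[1:]], c2


if __name__ == "__main__":
    ch = [80, 160, 160]  # yolov8x outputs of layer 0 (Conv), 1 (Conv), 2 (C2f)
    args, c2 = cbam_branch(ch, -1, [128, 7], gw=1.25, nc=80)
    print(args, c2)  # [160, 7] 160: CBAM(160, 7), and the next layer is told 160 channels
    args, c2 = cbam_branch(ch, -1, [256, 7], gw=1.25, nc=80)
    print(args, c2)  # [160, 7] 320: CBAM still outputs 160 channels, but the next layer expects 320
```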

models folder

Go back up one directory and enter the models folder.

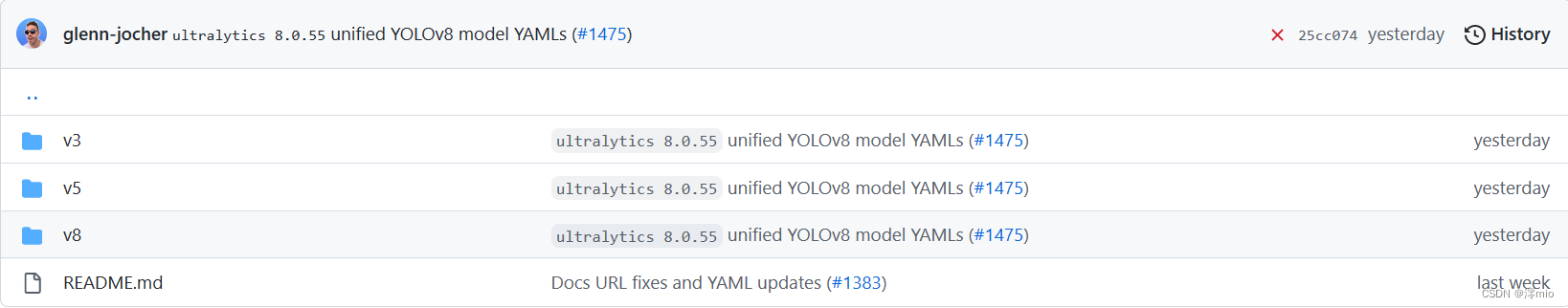

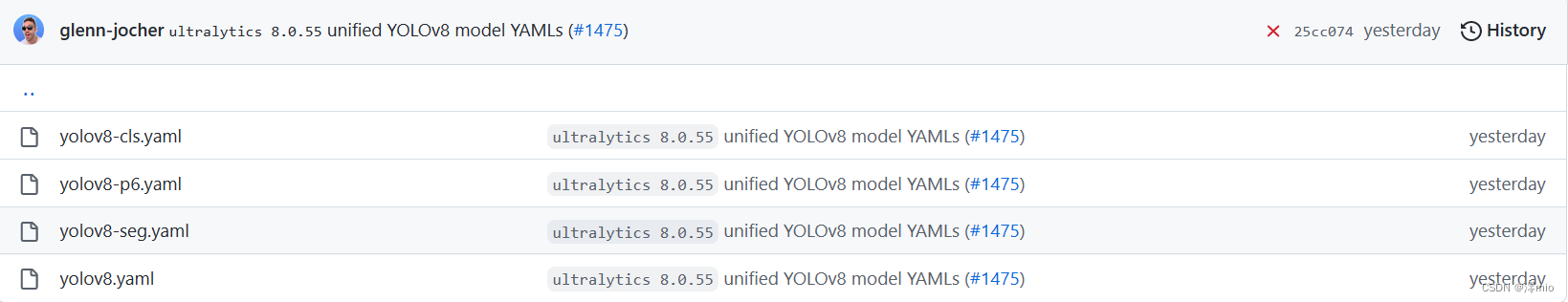

As you can see, this folder also contains the model configuration files for v5 and v3, so the same package can be used to train v5 and v3 as well.

Enter the v8 folder

Open yolov8.yaml, shown below. This is the V8 configuration file; it specifies the number of classes, the model scales (n, s, m, l, x), the backbone, and the head.

```yaml
# Ultralytics YOLO 🚀, GPL-3.0 license
# YOLOv8 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80  # number of classes
scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.33, 0.25, 1024]  # YOLOv8n summary: 225 layers,  3157200 parameters,  3157184 gradients,   8.9 GFLOPs
  s: [0.33, 0.50, 1024]  # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients,  28.8 GFLOPs
  m: [0.67, 0.75, 768]   # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients,  79.3 GFLOPs
  l: [1.00, 1.00, 512]   # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
  x: [1.00, 1.25, 512]   # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

# YOLOv8.0n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]]  # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]]  # 1-P2/4
  - [-1, 3, C2f, [128, True]]
  - [-1, 1, Conv, [256, 3, 2]]  # 3-P3/8
  - [-1, 6, C2f, [256, True]]
  - [-1, 1, Conv, [512, 3, 2]]  # 5-P4/16
  - [-1, 6, C2f, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]]  # 7-P5/32
  - [-1, 3, C2f, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]]  # 9

# YOLOv8.0n head
head:
  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]
  - [[-1, 6], 1, Concat, [1]]  # cat backbone P4
  - [-1, 3, C2f, [512]]  # 12

  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]
  - [[-1, 4], 1, Concat, [1]]  # cat backbone P3
  - [-1, 3, C2f, [256]]  # 15 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 12], 1, Concat, [1]]  # cat head P4
  - [-1, 3, C2f, [512]]  # 18 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 9], 1, Concat, [1]]  # cat head P5
  - [-1, 3, C2f, [1024]]  # 21 (P5/32-large)

  - [[15, 18, 21], 1, Detect, [nc]]  # Detect(P3, P4, P5)
```

Taking yolov8x as the example, we make a copy of this configuration and rename it myyolo.yaml.
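For reference, the sketch below shows how a scale turns the yaml numbers into actual repeats and channels. It assumes the scaling rule of ultralytics versions that read `scales` (to my understanding: repeats become max(round(n × depth), 1) when n > 1, and channels become make_divisible(min(c, max_channels) × width, 8)); the parse_model shown earlier reads depth_multiple and width_multiple directly and has no max_channels cap. `scale_layer` and `SCALES` are illustrative names. It also shows why the yolov8x copy writes 512 where yolov8.yaml writes 1024: with max_channels = 512 and width 1.25, both end up as 640 channels.

```python
import math
from typing import Tuple


def make_divisible(x: float, divisor: int = 8) -> int:
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def scale_layer(repeats: int, channels: int, depth: float, width: float,
                max_channels: int) -> Tuple[int, int]:
    """Return (repeats, channels) of one yaml layer after compound scaling (assumed rule)."""
    n = max(round(repeats * depth), 1) if repeats > 1 else repeats
    c = make_divisible(min(channels, max_channels) * width, 8)
    return n, c


SCALES = {  # [depth, width, max_channels] copied from yolov8.yaml
    "n": (0.33, 0.25, 1024),
    "s": (0.33, 0.50, 1024),
    "m": (0.67, 0.75, 768),
    "l": (1.00, 1.00, 512),
    "x": (1.00, 1.25, 512),
}

if __name__ == "__main__":
    for name, scale in SCALES.items():
        # backbone layer 8 of yolov8.yaml: [-1, 3, C2f, [1024, True]]
        print(name, scale_layer(3, 1024, *scale))
    # n (1, 256) / s (1, 512) / m (2, 576) / l (3, 512) / x (3, 640)
```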

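```yaml
# Ultralytics YOLO 🚀, GPL-3.0 license

# Parameters
nc: 80  # number of classes
depth_multiple: 1.00  # scales module repeats
width_multiple: 1.25  # scales convolution channels

# YOLOv8.0x backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]]  # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]]  # 1-P2/4
  - [-1, 3, C2f, [128, True]]  # 2
  - [-1, 3, CBAM, [128, 7]]  # 3 (added)
  - [-1, 1, Conv, [256, 3, 2]]  # 4-P3/8
  - [-1, 6, C2f, [256, True]]  # 5
  - [-1, 1, Conv, [512, 3, 2]]  # 6-P4/16
  - [-1, 6, C2f, [512, True]]  # 7
  - [-1, 1, Conv, [512, 3, 2]]  # 8-P5/32
  - [-1, 3, C2f, [512, True]]  # 9
  - [-1, 1, SPPF, [512, 5]]  # 10
  - [-1, 3, CBAM, [512, 7]]  # 11 (added)

# YOLOv8.0x head
head:
  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]  # 12
  - [[-1, 7], 1, Concat, [1]]  # 13 cat backbone P4
  - [-1, 3, C2f, [512]]  # 14

  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]  # 15
  - [[-1, 5], 1, Concat, [1]]  # 16 cat backbone P3
  - [-1, 3, C2f, [256]]  # 17 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]  # 18
  - [[-1, 14], 1, Concat, [1]]  # 19 cat head P4
  - [-1, 3, C2f, [512]]  # 20 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]  # 21
  - [[-1, 11], 1, Concat, [1]]  # 22 cat head P5
  - [-1, 3, C2f, [512]]  # 23 (P5/32-large)

  - [[17, 20, 23], 1, Detect, [nc]]  # Detect(P3, P4, P5)
```

Here one CBAM layer is added after the first C2f block (layer 3) and another after the SPPF module (layer 11). For the one after SPPF the arguments are [512, 7]: 512 is the channel argument, which parse_model scales by width_multiple exactly like the preceding SPPF's 512, so both resolve to the same real channel count; 7 is the kernel size of the SpatialAttention convolution and must be 3 or 7, since any other value fails the assertion. Because every inserted layer shifts the index of all layers after it, the `from` indices in the head and the Detect inputs have been renumbered accordingly, and the P5 Concat now takes the CBAM output (layer 11) instead of SPPF.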
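A wrong `from` index does not always crash: pointing a Concat at a neighbouring layer with the same stride still runs, just with the wrong features. It does crash when a Concat joins feature maps of different strides. The sketch below walks a layer list, tracks each layer's stride, and reports such Concats. It assumes only Conv (args[2] = stride) and nn.Upsample (scale factor args[1]) change resolution, which holds for the modules in these configs; all names are hypothetical. The default image size of 256 reflects my understanding that ultralytics runs a 256×256 dummy image through the model while building it to compute strides, so P4/16 and P3/8 maps are 16 and 32 pixels wide, matching the "Expected size 16 but got size 32" error quoted in this section.

```python
from typing import List, Sequence, Tuple, Union

From = Union[int, List[int]]
Layer = Tuple[From, str, list]  # (from, module, args) as in the yaml; repeats do not affect stride

V8_BACKBONE: List[Layer] = [  # copied from yolov8.yaml
    (-1, "Conv", [64, 3, 2]),    # 0-P1/2
    (-1, "Conv", [128, 3, 2]),   # 1-P2/4
    (-1, "C2f", [128, True]),    # 2
    (-1, "Conv", [256, 3, 2]),   # 3-P3/8
    (-1, "C2f", [256, True]),    # 4
    (-1, "Conv", [512, 3, 2]),   # 5-P4/16
    (-1, "C2f", [512, True]),    # 6
    (-1, "Conv", [1024, 3, 2]),  # 7-P5/32
    (-1, "C2f", [1024, True]),   # 8
    (-1, "SPPF", [1024, 5]),     # 9
]
V8_HEAD: List[Layer] = [
    (-1, "nn.Upsample", [None, 2, "nearest"]),  # 10
    ([-1, 6], "Concat", [1]),                   # 11
    (-1, "C2f", [512]),                         # 12
    (-1, "nn.Upsample", [None, 2, "nearest"]),  # 13
    ([-1, 4], "Concat", [1]),                   # 14
    (-1, "C2f", [256]),                         # 15
    (-1, "Conv", [256, 3, 2]),                  # 16
    ([-1, 12], "Concat", [1]),                  # 17
    (-1, "C2f", [512]),                         # 18
    (-1, "Conv", [512, 3, 2]),                  # 19
    ([-1, 9], "Concat", [1]),                   # 20
    (-1, "C2f", [1024]),                        # 21
    ([15, 18, 21], "Detect", ["nc"]),           # 22
]


def layer_strides(layers: Sequence[Layer], img: int = 256) -> List[int]:
    """Return each layer's stride; raise ValueError when a Concat mixes strides."""
    strides: List[int] = []
    for i, (f, m, args) in enumerate(layers):
        srcs = [f] if isinstance(f, int) else list(f)
        idx = [i + j if j < 0 else j for j in srcs]  # -1 means "previous layer"
        ins = [strides[j] if j >= 0 else 1 for j in idx]  # -1 at layer 0 is the input image
        if m == "Concat" and len(set(ins)) > 1:
            sizes = [img // s for s in ins]
            raise ValueError(f"layer {i}: Concat{idx} mixes spatial sizes {sizes} at {img}x{img}")
        if m == "Conv":
            s = ins[0] * (args[2] if len(args) > 2 else 1)  # Conv args: [c2, k, s]
        elif m == "nn.Upsample":
            s = ins[0] // args[1]  # scale factor 2 halves the stride
        else:  # C2f, SPPF, CBAM, Concat, Detect keep the resolution of their first input
            s = ins[0]
        strides.append(s)
    return strides


if __name__ == "__main__":
    s = layer_strides(V8_BACKBONE + V8_HEAD)
    print([s[i] for i in (15, 18, 21)])  # [8, 16, 32]
    # two CBAMs inserted in the P3 stage, head indices left unchanged
    cbam = (-1, "CBAM", [256, 7])
    bad = V8_BACKBONE[:4] + [cbam, V8_BACKBONE[4], cbam] + V8_BACKBONE[5:] + V8_HEAD
    try:
        layer_strides(bad)
    except ValueError as e:
        print(e)  # layer 13: Concat[12, 6] mixes spatial sizes [16, 32] at 256x256
```

This only catches stride mismatches; a Concat that points at the wrong layer of the right stride still passes, so renumbered indices are worth checking by hand as well.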

After adding the modules, train with the corresponding yaml configuration file:

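```shell
yolo task=detect mode=train model=myyolo.yaml data=datasets/data/MOT20Det/VOC2007/mot20.yaml batch=32 epochs=80 imgsz=640 workers=16 device=0,1,2,3
```

Note that inserting CBAM layers (especially several of them) shifts the index of every layer that follows, so the `from` indices of the layers in the head must be updated at the same time.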
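The renumbering can be done mechanically. The sketch below (hypothetical helper `remap_from`) updates a layer's `from` field, given the original indices of the layers that each new module was inserted directly after. Absolute indices move by the number of insertions in front of them, and the relative -1 is left alone. Whether a reference to a layer that now has a CBAM right behind it should follow to the CBAM is a design choice, exposed here as `follow_inserted`.

```python
from typing import List, Sequence, Union

From = Union[int, List[int]]


def remap_from(f: From, inserted_after: Sequence[int], follow_inserted: bool = True) -> From:
    """Return the updated `from` field of a yaml layer after new layers were inserted.

    inserted_after: ORIGINAL indices of the layers a new layer was placed directly after.
    follow_inserted: if True, a reference to layer i is redirected to the last layer
    inserted directly after i (e.g. SPPF -> the CBAM behind it); if False it keeps
    pointing at layer i itself.
    """

    def new_index(i: int) -> int:
        if i < 0:  # relative index such as -1 ("previous layer") is left as-is
            return i
        shift = sum(1 for k in inserted_after if k < i)  # insertions now sitting before layer i
        if follow_inserted:
            shift += sum(1 for k in inserted_after if k == i)
        return i + shift

    return new_index(f) if isinstance(f, int) else [new_index(i) for i in f]


if __name__ == "__main__":
    inserted = [2, 9]  # CBAM after the original layer 2 (C2f) and layer 9 (SPPF)
    for old in ([-1, 6], [-1, 4], [-1, 12], [-1, 9], [15, 18, 21]):
        print(old, "->", remap_from(old, inserted))
    # [-1, 6] -> [-1, 7]
    # [-1, 4] -> [-1, 5]
    # [-1, 12] -> [-1, 14]
    # [-1, 9] -> [-1, 11]
    # [15, 18, 21] -> [17, 20, 23]
```

Relative indices other than -1 (such as -2) would also need adjusting if a layer is inserted between a layer and its source; the sketch does not handle that case.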

The default layer layout of the original YOLOv8x, as printed by parse_model, is as follows:

```
                from  n    params  module                                  arguments
  0               -1  1      2320  ultralytics.nn.modules.Conv             [3, 80, 3, 2]
  1               -1  1    115520  ultralytics.nn.modules.Conv             [80, 160, 3, 2]
  2               -1  3    436800  ultralytics.nn.modules.C2f              [160, 160, 3, True]
  3               -1  1    461440  ultralytics.nn.modules.Conv             [160, 320, 3, 2]
  4               -1  6   3281920  ultralytics.nn.modules.C2f              [320, 320, 6, True]
  5               -1  1   1844480  ultralytics.nn.modules.Conv             [320, 640, 3, 2]
  6               -1  6  13117440  ultralytics.nn.modules.C2f              [640, 640, 6, True]
  7               -1  1   3687680  ultralytics.nn.modules.Conv             [640, 640, 3, 2]
  8               -1  3   6969600  ultralytics.nn.modules.C2f              [640, 640, 3, True]
  9               -1  1   1025920  ultralytics.nn.modules.SPPF             [640, 640, 5]
 10               -1  1         0  torch.nn.modules.upsampling.Upsample    [None, 2, 'nearest']
 11          [-1, 6]  1         0  ultralytics.nn.modules.Concat           [1]
 12               -1  3   7379200  ultralytics.nn.modules.C2f              [1280, 640, 3]
 13               -1  1         0  torch.nn.modules.upsampling.Upsample    [None, 2, 'nearest']
 14          [-1, 4]  1         0  ultralytics.nn.modules.Concat           [1]
 15               -1  3   1948800  ultralytics.nn.modules.C2f              [960, 320, 3]
 16               -1  1    922240  ultralytics.nn.modules.Conv             [320, 320, 3, 2]
 17         [-1, 12]  1         0  ultralytics.nn.modules.Concat           [1]
 18               -1  3   7174400  ultralytics.nn.modules.C2f              [960, 640, 3]
 19               -1  1   3687680  ultralytics.nn.modules.Conv             [640, 640, 3, 2]
 20          [-1, 9]  1         0  ultralytics.nn.modules.Concat           [1]
 21               -1  3   7379200  ultralytics.nn.modules.C2f              [1280, 640, 3]
 22     [15, 18, 21]  1   8795008  ultralytics.nn.modules.Detect           [80, [320, 640, 640]]
```

After a module is inserted, the index of every layer behind it increases by 1, so the indices must be adjusted accordingly; otherwise a Concat dimension-mismatch error is raised, for example:

```
RuntimeError: Sizes of tensors must match except in dimension 1. Expected size 16 but got size 32 for tensor number 1 in the list.
```

Summary

Adding an attention module takes just three steps:

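1. Add the required module to modules.py.

2. Import the module from modules.py in tasks.py and match its arguments appropriately in parse_model.

3. Modify the corresponding yaml file in the models folder, paying attention to the layer indices.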

After that, you can train as usual.