1. Pooling Layers

1.1 How Pooling Layers Work

① Max pooling is sometimes also called downsampling.

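② dilation sets the spacing between the elements of the pooling window, the same idea as dilated (atrous) convolution, as shown in the figure below.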

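③ ceil_mode controls what happens when the pooling window extends past the edge of the input. With ceil_mode=True the partial window is kept and the maximum is taken over the values it still covers; with ceil_mode=False (the default) the partial window is discarded.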

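④ Pooling shrinks the data, for example from 5 * 5 to 3 * 3 or even 1 * 1, which greatly reduces the amount of data that later layers have to process. By analogy, a 1080P movie downscaled to 720P or 360P plays more smoothly at the same network speed.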
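To make points ② and ③ concrete, here is a minimal pure-Python sketch of max pooling. The function name max_pool2d and its parameters are hypothetical and chosen only for illustration; it ignores padding and is meant to mirror the behaviour described above, not to reproduce the actual implementation of torch.nn.MaxPool2d. It uses the same 5 * 5 matrix as section 1.2.

```python
import math


def max_pool2d(grid, kernel_size, stride=None, dilation=1, ceil_mode=False):
    """Naive 2D max pooling over a list of lists (illustrative, no padding)."""
    stride = kernel_size if stride is None else stride  # default: stride = kernel size
    rows, cols = len(grid), len(grid[0])
    span = dilation * (kernel_size - 1) + 1  # extent covered by one window
    rounding = math.ceil if ceil_mode else math.floor

    def out_len(size):
        n = rounding((size - span) / stride) + 1
        # In ceil mode the last window must still start inside the input.
        if ceil_mode and (n - 1) * stride >= size:
            n -= 1
        return n

    offsets = [k * dilation for k in range(kernel_size)]
    pooled = []
    for i in range(out_len(rows)):
        row = []
        for j in range(out_len(cols)):
            r0, c0 = i * stride, j * stride
            # Positions past the edge are skipped (only reachable with ceil_mode=True).
            window = [grid[r0 + dr][c0 + dc]
                      for dr in offsets for dc in offsets
                      if r0 + dr < rows and c0 + dc < cols]
            row.append(max(window))
        pooled.append(row)
    return pooled


grid = [[1, 2, 0, 3, 1],
        [0, 1, 2, 3, 1],
        [1, 2, 1, 0, 0],
        [5, 2, 3, 1, 1],
        [2, 1, 0, 1, 1]]

print(max_pool2d(grid, kernel_size=3, ceil_mode=True))    # [[2, 3], [5, 1]]
print(max_pool2d(grid, kernel_size=3, ceil_mode=False))   # [[2]]
print(max_pool2d(grid, kernel_size=2, stride=1, dilation=2))
# [[1, 3, 1], [5, 3, 3], [2, 2, 1]]
```

With ceil_mode=True the three partial windows along the right and bottom edges are kept, so the result is 2 * 2; with ceil_mode=False only the single complete 3 * 3 window survives. With dilation=2 a 2 * 2 kernel spans a 3 * 3 area, sampling every other element.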

1.2 Pooling a Tensor

    import torch
    from torch import nn
    from torch.nn import MaxPool2d

    # 5 x 5 input matrix
    input = torch.tensor([[1, 2, 0, 3, 1],
                          [0, 1, 2, 3, 1],
                          [1, 2, 1, 0, 0],
                          [5, 2, 3, 1, 1],
                          [2, 1, 0, 1, 1]], dtype=torch.float32)
    # MaxPool2d expects (batch, channels, height, width)
    input = torch.reshape(input, (-1, 1, 5, 5))
    print(input.shape)

    class MyModule(nn.Module):
        def __init__(self):
            super(MyModule, self).__init__()
            # stride defaults to kernel_size; ceil_mode=True keeps partial windows
            self.maxpool = MaxPool2d(kernel_size=3, ceil_mode=True)

        def forward(self, input):
            output = self.maxpool(input)
            return output

    myModule = MyModule()
    output = myModule(input)
    print(output)

Output:

    torch.Size([1, 1, 5, 5])
    tensor([[[[2., 3.],
              [5., 1.]]]])

1.3 Pooling Images

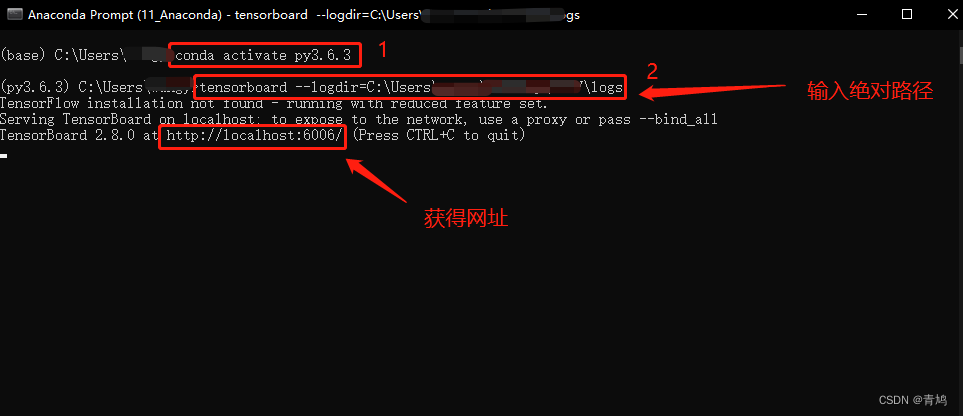

    import torch
    import torchvision
    from torch import nn
    from torch.nn import MaxPool2d
    from torch.utils.data import DataLoader
    from torch.utils.tensorboard import SummaryWriter

    # CIFAR10 test set, converted to tensors
    dataset = torchvision.datasets.CIFAR10("./dataset", train=False,
                                           transform=torchvision.transforms.ToTensor(),
                                           download=True)
    dataloader = DataLoader(dataset, batch_size=64)

    class MyModule(nn.Module):
        def __init__(self):
            super(MyModule, self).__init__()
            self.maxpool = MaxPool2d(kernel_size=3, ceil_mode=True)

        def forward(self, input):
            output = self.maxpool(input)
            return output

    myModule = MyModule()
    writer = SummaryWriter("logs")
    step = 0
    for data in dataloader:
        imgs, targets = data
        writer.add_images("input", imgs, step)
        output = myModule(imgs)  # was tudui(imgs), a name that is never defined
        writer.add_images("output", output, step)
        step = step + 1
    writer.close()  # flush pending events to disk

Output:

    Files already downloaded and verified

In an Anaconda terminal, activate the py3.6.3 environment, then run the following command, copy the URL it prints into the browser's address bar, and press Enter to view the logs in TensorBoard:

    tensorboard --logdir=C:\Users\qj\CV\logs

No screenshots are included here; open the link that is displayed to view the result.

2. Nonlinear Activation

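inplace means in-place replacement. If it is True, the input tensor itself is overwritten with the result. If it is False (the default), a new tensor is created to hold the result and the original input is left unchanged.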
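The difference can be checked directly. The following is a small sketch, assuming a PyTorch installation; the variable names are illustrative only.

```python
import torch
from torch.nn import ReLU

x = torch.tensor([[1.0, -0.5], [-1.0, 3.0]])

# inplace=False (default): a new tensor is returned and x keeps its values.
out = ReLU(inplace=False)(x)
print(x)    # still contains -0.5 and -1.0
print(out)  # negatives replaced by 0

# inplace=True: the input tensor itself is overwritten.
y = x.clone()
out_inplace = ReLU(inplace=True)(y)
print(y)                                      # negatives replaced by 0
print(out_inplace.data_ptr() == y.data_ptr())  # result shares y's memory
```

In-place operation saves memory, but the original values are lost, so inplace=False is the safer choice whenever the input is still needed afterwards.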

    import torch
    from torch import nn
    from torch.nn import ReLU

    input = torch.tensor([[1, -0.5],
                          [-1, 3]])
    # reshape to (batch, channels, height, width)
    input = torch.reshape(input, (-1, 1, 2, 2))
    print(input.shape)

    class MyModule(nn.Module):
        def __init__(self):
            super(MyModule, self).__init__()
            self.relu1 = ReLU()  # inplace defaults to False

        def forward(self, input):
            output = self.relu1(input)
            return output

    myModule = MyModule()
    output = myModule(input)
    print(output)

Output:

    torch.Size([1, 1, 2, 2])
    tensor([[[[1., 0.],
              [0., 3.]]]])

2.1 Displaying Results in TensorBoard

    import torch
    import torchvision
    from torch import nn
    from torch.nn import ReLU
    from torch.nn import Sigmoid
    from torch.utils.data import DataLoader
    from torch.utils.tensorboard import SummaryWriter

    # CIFAR10 test set, converted to tensors
    dataset = torchvision.datasets.CIFAR10("./dataset", train=False,
                                           transform=torchvision.transforms.ToTensor(),
                                           download=True)
    dataloader = DataLoader(dataset, batch_size=64)

    class MyModule(nn.Module):
        def __init__(self):
            super(MyModule, self).__init__()
            self.relu1 = ReLU()  # defined but not used in forward
            self.sigmoid1 = Sigmoid()

        def forward(self, input):
            output = self.sigmoid1(input)
            return output

    myModule = MyModule()
    writer = SummaryWriter("logs")
    step = 0
    for data in dataloader:
        imgs, targets = data
        writer.add_images("input", imgs, step)
        output = myModule(imgs)
        writer.add_images("output", output, step)
        step = step + 1
    writer.close()  # flush pending events to disk

In an Anaconda terminal, activate the py3.6.3 environment, then run the following command, copy the URL it prints into the browser's address bar, and press Enter to view the logs in TensorBoard:

    tensorboard --logdir=C:\Users\qj\CV\logs

No screenshots are included here; open the link that is displayed to view the result.
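The module in this section defines both ReLU and Sigmoid but applies only Sigmoid. A likely reason (an assumption, not stated in the original) is that ToTensor produces pixel values in the range [0, 1], where ReLU changes nothing, so its effect would be invisible in TensorBoard. The sketch below uses a random stand-in batch rather than CIFAR10 to illustrate this.

```python
import torch
from torch.nn import ReLU, Sigmoid

torch.manual_seed(0)
imgs = torch.rand(4, 3, 32, 32)  # stand-in batch with values in [0, 1)

relu_out = ReLU()(imgs)
sigmoid_out = Sigmoid()(imgs)

# ReLU only clips negatives, so a non-negative input passes through unchanged.
print(torch.equal(relu_out, imgs))

# Sigmoid maps [0, 1) into roughly [0.5, 0.73), which flattens the contrast.
print(sigmoid_out.min().item(), sigmoid_out.max().item())
```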