LLM: A LLaMA-7B Model Experiment

The LLaMA models have been open-sourced for quite a while, so I ran a quick experiment with the smallest one.

1. Server Purchase and Configuration

1.1 Buying a Server

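Since this was only a quick trial and I did not plan to keep the server long term, I bought an Alibaba Cloud server on three criteria: cheap, sufficient, and easy to refund.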

The 7B model weights are only about 13 GB, so I first bought a virtual machine with 32 GB of RAM.

That turned out to be a trap: loading the model was killed by the OOM killer straight away. The kernel log shows:

    dmesg | egrep -i -B100 'killed process'
    Killed process 63907 (python) total-vm:40772484kB, anon-rss:30914228kB, file-rss:4kB, shmem-rss:0kB, UID:0 pgtables:61332kB oom_score_adj

So loading 7B needs about 39 GB of memory (total-vm is 40772484 kB); I had been naive. I then upgraded the instance:
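
To make the kernel's numbers easier to read, here is a minimal sketch that converts the kB fields of the OOM-killer line above into GiB. The helper name `parse_oom_line` is made up for this illustration, and the sketch assumes the kernel's "kB" means 1024 bytes.

```python
import re

# The OOM-killer line from the log above (the truncated oom_score_adj field is left out).
OOM_LINE = (
    "Killed process 63907 (python) total-vm:40772484kB, "
    "anon-rss:30914228kB, file-rss:4kB, shmem-rss:0kB, UID:0 pgtables:61332kB"
)


def parse_oom_line(line: str) -> dict:
    """Return every '<name>:<number>kB' field of the line, converted to GiB.

    Hypothetical helper for illustration; assumes 1 kB = 1024 bytes.
    """
    fields = re.findall(r"([\w-]+):(\d+)kB", line)
    return {name: int(kb) / 1024 ** 2 for name, kb in fields}


usage = parse_oom_line(OOM_LINE)
for name, gib in usage.items():
    print(f"{name:>10}: {gib:6.2f} GiB")
```

Here total-vm is the virtual address space the process had mapped, while anon-rss is the part actually resident in RAM when it was killed, so the two numbers bracket what the load really needed.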

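CPU & memory: 8 vCPUs, 64 GiB

OS: Alibaba Cloud Linux 3.2104 LTS 64-bit, ARM edition, MLPS 2.0 Level 3 edition

Instance type: ecs. … (I forgot the instance type used before the upgrade)

Bandwidth: 5 Mbps

Cost: about 2.5 CNY/hour

1.2 Quick Environment Setup and Model Weight Download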

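When installing Anaconda here, pay attention to the CPU architecture: this instance is aarch64, so the aarch64 installer is required.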
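
The architecture matters because the Anaconda installer file name encodes it. As a rough sketch, the choice could be scripted as follows. The function name `pick_installer` is hypothetical, and only two architectures are listed: the aarch64 name is the one used in this post, and the x86_64 name is included for comparison.

```python
import platform

# Installer file names under https://repo.anaconda.com/archive/ for release 2023.03.
# Only two architectures are listed here; this table is illustrative, not exhaustive.
INSTALLERS = {
    "aarch64": "Anaconda3-2023.03-Linux-aarch64.sh",
    "x86_64": "Anaconda3-2023.03-Linux-x86_64.sh",
}


def pick_installer(machine: str) -> str:
    """Map a machine type (as printed by `arch`) to an installer file name."""
    try:
        return INSTALLERS[machine]
    except KeyError:
        raise ValueError(f"no installer listed for architecture {machine!r}") from None


# The ARM instance in this post reports aarch64.
print(pick_installer("aarch64"))

# What the current machine reports, without failing on unlisted architectures.
current = platform.machine()
print(current, "->", INSTALLERS.get(current, "not listed here"))
```

`platform.machine()` returns the same kind of string that the `arch` command prints, which is why it can stand in for the manual check.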

    # Install git
    yum install git
    yum install -y bzip2
    yum install dpkg
    yum install md5

    # Check the system architecture
    arch  # aarch64

    # Install Anaconda: https://repo.anaconda.com/archive/
    wget --no-check-certificate https://repo.anaconda.com/archive/Anaconda3-2023.03-Linux-aarch64.sh
    chmod +x Anaconda3-2023.03-Linux-aarch64.sh
    ./Anaconda3-2023.03-Linux-aarch64.sh

    # Add Anaconda to PATH: put the export line into ~/.bashrc, then reload it
    cd ~
    vi .bashrc
    export PATH=/root/anaconda3/bin:$PATH
    source ~/.bashrc
    conda env list

    # Create environments
    conda create -n sccDeep --clone base
    conda create -n joyrl --clone base
    pip install --pre torch torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/nightly/cpu

    # yum install gdb
    yum install clang
    yum install llvm  # https://help.aliyun.com/practice_detail/433400

    # Download the data from Baidu Cloud
    pip install bypy
    bypy info
    bypy list
    nohup bypy downfile LLaMA/7B/params.json > __th1.log &

2. Trying LLaMA

I only did a simple trial. Because everything runs on the CPU, the .cuda() calls in the model files have to be removed.

In addition, dist.init_process_group has to use the gloo backend.
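
Removing the `.cuda()` calls by hand is easy to get wrong, so here is a minimal sketch of doing it with a text substitution. This is an assumption about how one might automate the edit, not the procedure actually used in this post. The function name `strip_cuda_calls` and the sample line are hypothetical.

```python
import re

# Matches a bare `.cuda()` call with no arguments, e.g. `tensor.cuda()`.
# Calls with arguments, such as `.cuda(0)`, are deliberately left alone.
CUDA_CALL = re.compile(r"\.cuda\(\s*\)")


def strip_cuda_calls(source: str) -> str:
    """Return the source text with every argument-free `.cuda()` call removed."""
    return CUDA_CALL.sub("", source)


# Hypothetical sample line, only to show the effect of the substitution.
sample = "tokens = torch.full((bsz, total_len), pad_id).cuda().long()\n"
print(strip_cuda_calls(sample), end="")
```

To apply it to a file, read the text with `pathlib.Path.read_text`, pass it through `strip_cuda_calls`, and write it back, then review the diff by hand. The substitution only touches `.cuda()` calls; any other GPU-specific code, such as CUDA default tensor types, still needs a manual change.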

    import sys
    import os
    import torch
    import torch.distributed as dist
    import json
    from pathlib import Path
    from rich.console import Console
    from fairscale.nn.model_parallel.initialize import initialize_model_parallel

    sys.path.append('/root/llama/llama')
    from llama import ModelArgs, Transformer, Tokenizer, LLaMA
    import logging
    from functools import wraps
    from datetime import datetime

    log = logging.getLogger(__name__)
    log.setLevel(logging.DEBUG)
    formatter = logging.Formatter(fmt='%(asctime)s %(name)s %(levelname)s %(message)s', datefmt='%Y-%m-%d %H:%M:%S')
    handler = logging.StreamHandler(sys.stdout)
    handler.setLevel(logging.DEBUG)
    handler.setFormatter(formatter)
    log.addHandler(handler)
    log.info('start')
    cs = Console()


    def clock(func):
        # Print how long the wrapped function takes
        @wraps(func)
        def clocked(*args, **kwargs):
            st = datetime.now()
            res = func(*args, **kwargs)
            cost_ = datetime.now() - st
            func_name = func.__name__
            print(f'{func_name} Done ! It cost {cost_}.')
            return res
        return clocked


    def load_7B_model():
        local_rank = int(os.environ.get("LOCAL_RANK", 0))
        world_size = int(os.environ.get("WORLD_SIZE", 1))
        # gloo for CPU; nccl for GPU
        dist.init_process_group('gloo', init_method='file:///tmp/tmpInit19', rank=local_rank, world_size=world_size)
        # torch.distributed.init_process_group("nccl")
        initialize_model_parallel(world_size)
        max_seq_len = 512
        max_batch_size = 2
        ckpt_dir = '/root/llama/llama/model/7B/'
        with open(Path(ckpt_dir) / "params.json", "r") as f:
            params = json.loads(f.read())

        model_args = ModelArgs(
            max_seq_len=max_seq_len, max_batch_size=max_batch_size, **params
        )
        tokenizer_path = '/root/llama/llama/model/tokenizer.model'
        tokenizer = Tokenizer(model_path=tokenizer_path)
        model_args.vocab_size = tokenizer.n_words
        model = Transformer(model_args)
        log.info('load Transformer Struct')
        ckpt_path = os.path.join(ckpt_dir, 'consolidated.00.pth')
        # checkpoint = torch.load(ckpt_path, map_location="cpu")
        log.info('start load params')
        model.load_state_dict(torch.load(ckpt_path, map_location="cpu"), strict=False)
        generator = LLaMA(model, tokenizer)
        return generator


    generator = load_7B_model()


    @clock
    def ask_llama(pp):
        return generator.generate(
            [pp], max_gen_len=256, temperature=0.8, top_p=0.95)


    ask_llama('I believe the meaning of life is')

After two simple queries I lost interest...

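First, inference is extremely slow: each query takes more than 3 minutes (the rented cloud server is fairly low-end).

Second, the quality of the generated text is far below what I expected.

Take the first prompt, 'I believe the meaning of life is':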

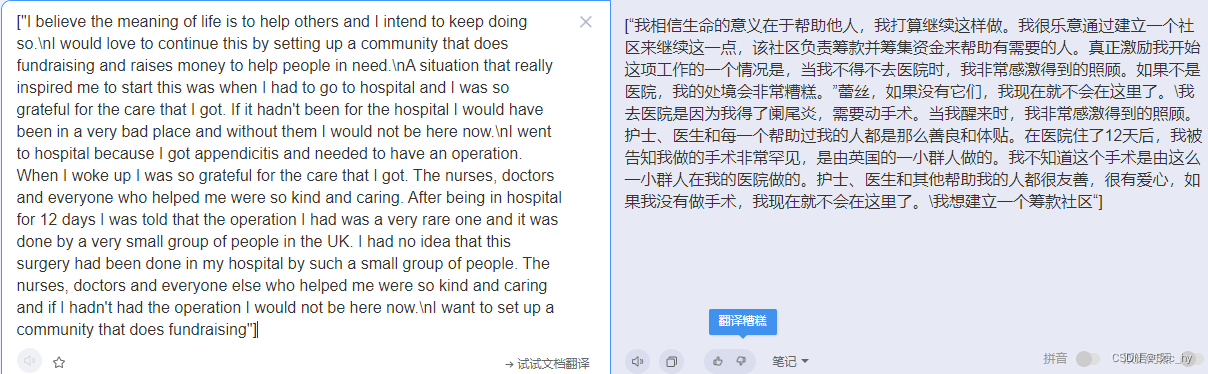

>>> ask_llama('I believe the meaning of life is')ask_llama Done ! It cost 0:03:11.220175.["I believe the meaning of life is to help others and I intend to keep doing so.\nI would love to continue this by setting up a community that does fundraising and raises money to help people in need.\nA situation that really inspired me to start this was when I had to go to hospital and I was so grateful for the care that I got. If it hadn't been for the hospital I would have been in a very bad place and without them I would not be here now.\nI went to hospital because I got appendicitis and needed to have an operation. When I woke up I was so grateful for the care that I got. The nurses, doctors and everyone who helped me were so kind and caring. After being in hospital for 12 days I was told that the operation I had was a very rare one and it was done by a very small group of people in the UK. I had no idea that this surgery had been done in my hospital by such a small group of people. The nurses, doctors and everyone else who helped me were so kind and caring and if I hadn't had the operation I would not be here now.\nI want to set up a community that does fundraising"]

看第二个prompt : 'I would like to travel to Japan. Help me draft a travel plan',

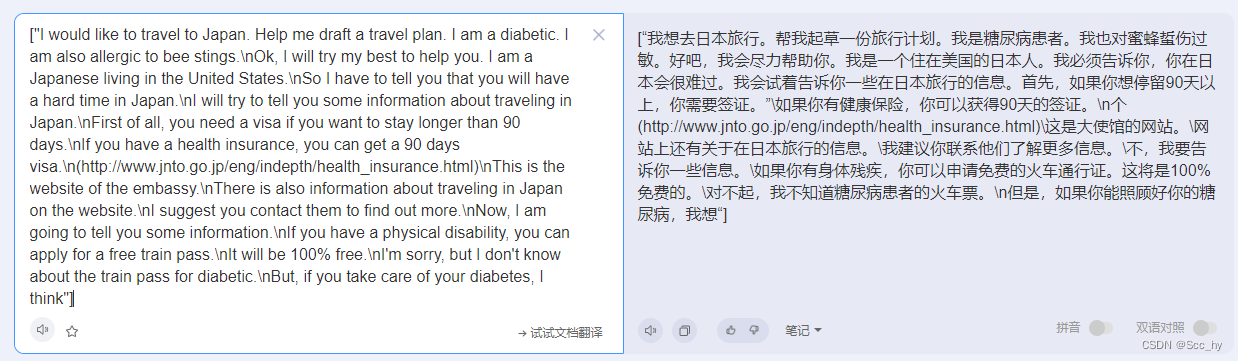

>>> ask_llama('I would like to travel to Japan. Help me draft a travel plan')ask_llama Done ! It cost 0:03:11.220175.["I would like to travel to Japan. Help me draft a travel plan. I am a diabetic. I am also allergic to bee stings.\nOk, I will try my best to help you. I am a Japanese living in the United States.\nSo I have to tell you that you will have a hard time in Japan.\nI will try to tell you some information about traveling in Japan.\nFirst of all, you need a visa if you want to stay longer than 90 days.\nIf you have a health insurance, you can get a 90 days visa.\n(http://www.jnto.go.jp/eng/indepth/health_insurance.html)\nThis is the website of the embassy.\nThere is also information about traveling in Japan on the website.\nI suggest you contact them to find out more.\nNow, I am going to tell you some information.\nIf you have a physical disability, you can apply for a free train pass.\nIt will be 100% free.\nI'm sorry, but I don't know about the train pass for diabetic.\nBut, if you take care of your diabetes, I think"]"""

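The elapsed time printed by the `clock` decorator can be turned into a rough throughput figure. The sketch below assumes that every call generated the full `max_gen_len=256` new tokens; if fewer were produced, the real rate is lower. The helper `parse_elapsed` is made up for this illustration.

```python
from datetime import timedelta


def parse_elapsed(text: str) -> timedelta:
    """Parse 'H:MM:SS.ffffff', the form str(timedelta) prints for durations under a day."""
    hours, minutes, seconds = text.split(":")
    return timedelta(hours=int(hours), minutes=int(minutes), seconds=float(seconds))


elapsed = parse_elapsed("0:03:11.220175")  # value printed in the runs above
max_gen_len = 256                          # value passed to generator.generate

seconds = elapsed.total_seconds()
tokens_per_second = max_gen_len / seconds  # assumes all 256 tokens were generated

print(f"elapsed: {seconds:.2f} s")
print(f"throughput: {tokens_per_second:.2f} tokens/s")
print(f"latency: {seconds / max_gen_len:.2f} s per token")
```

Under that assumption the 8-vCPU instance produces a little over one token per second, which matches the impression that CPU-only inference is too slow for interactive use.
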
3. Summary of the LLaMA Trial

If you could not get approved for the model, you can message me privately for the weights.

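It cost me a 100 CNY note just to get a feel for LLaMA, and downloading the data took more than a day (everyone knows what Baidu Cloud download speeds are like).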

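Without a GPU with 20 GB+ of VRAM and 64 GB+ of RAM, you cannot really play with the LLaMA 7B model.

Without a GPU, loading the model needs 39 GB of memory.

The LLaMA model still needs some modification and further training before it produces better results.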
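
The 39 GB figure can be sanity-checked with a back-of-the-envelope estimate. The sketch below rests on assumptions that were not measured in this post: that LLaMA-7B has roughly 6.7e9 parameters, that the checkpoint stores them in 16-bit floats (consistent with the roughly 13 GB file), and that the model constructed on the CPU uses PyTorch's default 32-bit floats while the checkpoint is still in memory.

```python
GIB = 1024 ** 3

# Assumption: approximate parameter count of LLaMA-7B, not measured in this post.
N_PARAMS = 6.7e9


def tensor_gib(n_params: float, bytes_per_param: int) -> float:
    """Memory in GiB for n_params values of the given width."""
    return n_params * bytes_per_param / GIB


checkpoint_fp16 = tensor_gib(N_PARAMS, 2)  # weights as loaded from disk (assumed fp16)
model_fp32 = tensor_gib(N_PARAMS, 4)       # freshly constructed model (assumed fp32)
peak = checkpoint_fp16 + model_fp32        # both are alive during load_state_dict

print(f"checkpoint (fp16): {checkpoint_fp16:5.1f} GiB")
print(f"model (fp32):      {model_fp32:5.1f} GiB")
print(f"estimated peak:    {peak:5.1f} GiB")
```

If these assumptions hold, the estimate lands close to the total-vm value in the OOM log, which would explain why 13 GB of weights did not fit into a 32 GB machine.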
