目录

DeepSpeed配置参数 - 快速上手batch Sizeoptimizerschedulerfp16zero optimizationcsv monitor 例子

DeepSpeed配置参数 - 快速上手

DeepSpeed是微软发布的用于PyTorch的开源深度学习优化库。其主要特性是:

异构计算:ZeRO-Offload 机制同时利用 CPU 和 GPU 内存,使得在 GPU 单卡上训练 10 倍大的模型;计算加速:Sparse Attention kernel技术,支持的输入序列更长(10倍),执行速度更快(6倍),且保持精度;3D并行: 在多个 worker 之间,划分模型的各个层,借用了英伟达的 Megatron-LM,减少显存的使用量官方文档:https://deepspeed.readthedocs.io/en/latest/

配置参数文档:https://www.deepspeed.ai/docs/config-json/

这里针对几组重要的参数进行说明:

batch Size

train_batch_size = train_micro_batch_size_per_gpu * gradient_accumulation * number of GPUs.// 训练批次的大小 = 每个GPU上的微批次大小 * 几个微批次 * 几个GPUoptimizer

type:支持的有Adam, AdamW, OneBitAdam, Lamb, and OneBitLamb

其中常规的例子里用的是AdamW,也就是带L2正则化的Adam

params:参数字段填和torch里一样的参数

例如AdamW可以参考https://pytorch.org/docs/stable/optim.html#torch.optim.AdamW

// example: "optimizer": { "type": "AdamW", "params": { "lr": 3e-5, "betas": [0.8, 0.999], "eps": 1e-8, "weight_decay": 3e-7 } }scheduler

type: 支持的有LRRangeTest, OneCycle, WarmupLR, WarmupDecayLR (见https://deepspeed.readthedocs.io/en/latest/schedulers.html)

fp16

NVIDIA 的 Apex 包的混合精度/FP16 训练的配置(Apex还提供了amp模式,也可以使用,但在deepspeed中如果使用amp,则不能使用zero offload)

float32(FP32,单精度)使用32位二进制表示浮点数,更低精度的float16(FP16,半精度)所能表示的数字范围也更小,但是fp16的好处在于:同样的GPU显存,可以容纳更大的参数量、更多的训练数据;低精度的算力(FLOPS)可以做得更高;单位时间内,计算单元访问GPU显存上的数据可以获得更高的速度(摘自:https://zhuanlan.zhihu.com/p/601250710)

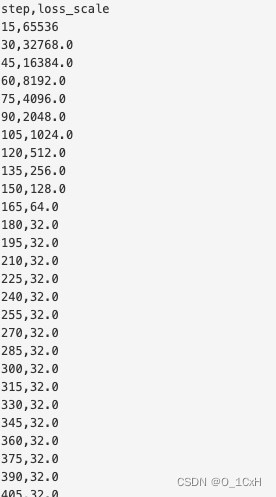

FP16的精度范围有限,训练一些模型的时候,梯度数值在FP16精度下都被表示为0,为了让这些梯度能够被FP16表示,可以在计算Loss的时候,将loss乘以一个扩大的系数loss scale,比如1024。这样,一个接近0的极小的数字经过乘法,就能过被FP16表示。这个过程发生在前向传播的最后一步,反向传播之前。loss scale有两种设置策略:

loss scale固定值,比如在[8, 32000]之间;动态调整,先将loss scale初始化为65536,如果出现上溢或下溢,在loss scale值基础上适当增加或减少。结合例子:

"fp16": { "enabled": true, "auto_cast": false, "loss_scale": 0, "initial_scale_power": 16, "loss_scale_window": 1000, "hysteresis": 2, "min_loss_scale": 1}这个配置打开了fp16,将初始的loss scale设置为2的16次方=65536,然后设置了动态调整(loss_scale=0.0使用动态调整,否则固定)

日志记录了一次训练中loss scale的变化

zero optimization

stage:zero优化有几个档位:0、1、2、3分别指禁用、优化器状态分区、优化器+梯度状态分区、优化器+梯度+参数分区。

offload_optimizer : 将优化器状态卸载到 CPU 或 NVMe,并将优化器计算卸载到 CPU,适用于 stage为 1、2、3。

offload_param : 将模型参数卸载到 CPU 或 NVMe,仅对stage = 3 有效

stage= 2 的例子:

"zero_optimization": { "stage": 2, "offload_optimizer": { "device": "cpu", "pin_memory": true }, "allgather_partitions": true, "allgather_bucket_size": 2e8, "overlap_comm": true, "reduce_scatter": true, "reduce_bucket_size": 2e8, "contiguous_gradients": true }stage = 3 的例子:

"zero_optimization": { "stage": 3, "offload_optimizer": { "device": "cpu", "pin_memory": true }, "offload_param": { "device": "cpu", "pin_memory": true }, "overlap_comm": true, "contiguous_gradients": true, "sub_group_size": 1e9, "reduce_bucket_size": "auto", "stage3_prefetch_bucket_size": "auto", "stage3_param_persistence_threshold": "auto", "stage3_max_live_parameters": 1e9, "stage3_max_reuse_distance": 1e9, "stage3_gather_16bit_weights_on_model_save": true }csv monitor

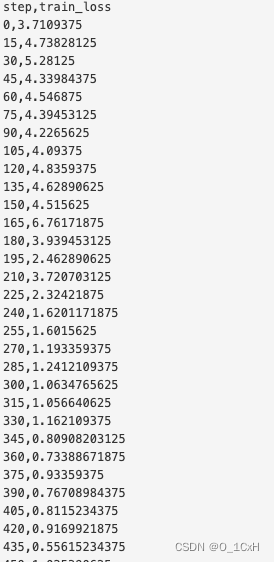

Monitor部分将训练详细信息记录到与 Tensorboard 兼容的文件、WandB 或简单的 CSV 文件中.

这是一个csv的例子:

"csv_monitor": { "enabled": true, "output_path": "output/ds_logs/", "job_name": "train_bert"}再一次训练中记录的loss值的变化

例子

最后是两个可以直接使用的stage=2 和 3 的配置文件,参数均设置了auto

{ "fp16": { "enabled": "auto", "loss_scale": 0, "loss_scale_window": 1000, "initial_scale_power": 16, "hysteresis": 2, "min_loss_scale": 1 }, "optimizer": { "type": "AdamW", "params": { "lr": "auto", "betas": "auto", "eps": "auto", "weight_decay": "auto" } }, "scheduler": { "type": "WarmupLR", "params": { "warmup_min_lr": "auto", "warmup_max_lr": "auto", "warmup_num_steps": "auto" } }, "zero_optimization": { "stage": 2, "offload_optimizer": { "device": "cpu", "pin_memory": true }, "allgather_partitions": true, "allgather_bucket_size": 2e8, "overlap_comm": true, "reduce_scatter": true, "reduce_bucket_size": 2e8, "contiguous_gradients": true }, "csv_monitor" : { "enabled": true, "job_name" : "stage2_test" }, "gradient_accumulation_steps": "auto", "gradient_clipping": "auto", "steps_per_print": 100, "train_batch_size": "auto", "train_micro_batch_size_per_gpu": "auto", "wall_clock_breakdown": false}{ "fp16": { "enabled": "auto", "loss_scale": 0, "loss_scale_window": 1000, "initial_scale_power": 16, "hysteresis": 2, "min_loss_scale": 1 }, "optimizer": { "type": "AdamW", "params": { "lr": "auto", "betas": "auto", "eps": "auto", "weight_decay": "auto" } }, "scheduler": { "type": "WarmupLR", "params": { "warmup_min_lr": "auto", "warmup_max_lr": "auto", "warmup_num_steps": "auto" } }, "zero_optimization": { "stage": 3, "offload_optimizer": { "device": "cpu", "pin_memory": true }, "offload_param": { "device": "cpu", "pin_memory": true }, "overlap_comm": true, "contiguous_gradients": true, "sub_group_size": 1e9, "reduce_bucket_size": "auto", "stage3_prefetch_bucket_size": "auto", "stage3_param_persistence_threshold": "auto", "stage3_max_live_parameters": 1e9, "stage3_max_reuse_distance": 1e9, "stage3_gather_16bit_weights_on_model_save": true }, "csv_monitor" : { "enabled": true, "job_name" : "stage3_test" }, "gradient_accumulation_steps": "auto", "gradient_clipping": "auto", "steps_per_print": 100, "train_batch_size": "auto", "train_micro_batch_size_per_gpu": "auto", "wall_clock_breakdown": false}