目录

一. 语义分割的含义

二. DeepLabV3+ 模型

三. 模型整体框架

四. 模型检测效果

五. 代码实现

六. 源码地址

一. 语义分割的含义

语义分割是计算机视觉中的基本任务,在语义分割中我们需要将视觉输入分为不同的语义可解释类别,「语义的可解释性」即分类类别在真实世界中是有意义的。例如,我们可能需要区分图像中属于汽车的所有像素,并把这些像素涂成蓝色。

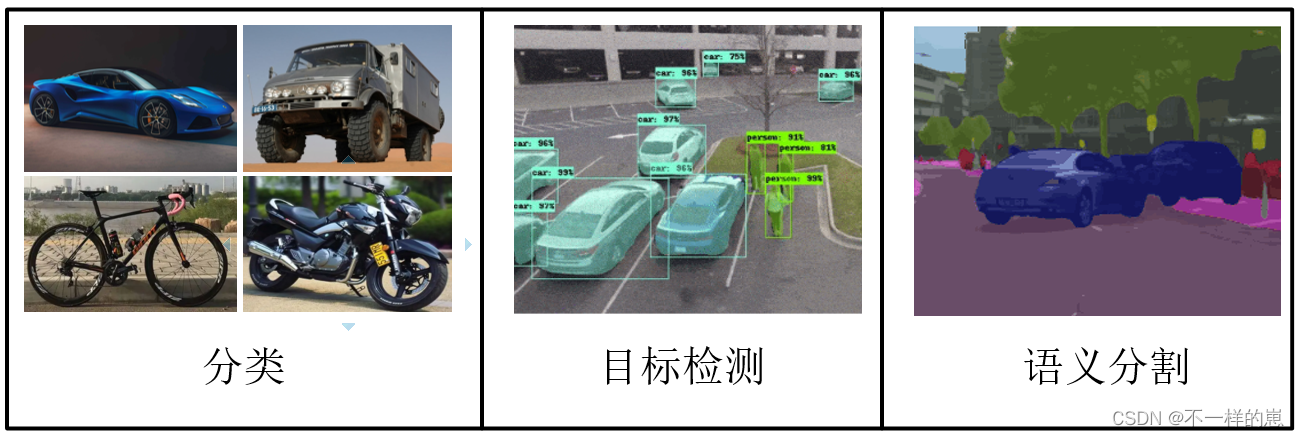

我们将 图像分类,目标检测 和 语义分割 进行对比 可以让我们更好的理解语义分割。

图像分类: 通过 提取特征,输出 待测图片趋向于某个种类

目标检测: 通过 提取特征,输出 待测图片中不同物体的位置与种类

语义分割: 通过 提取特征, 输出 待测图片的每个像素点的种类

二. DeepLabV3+ 模型

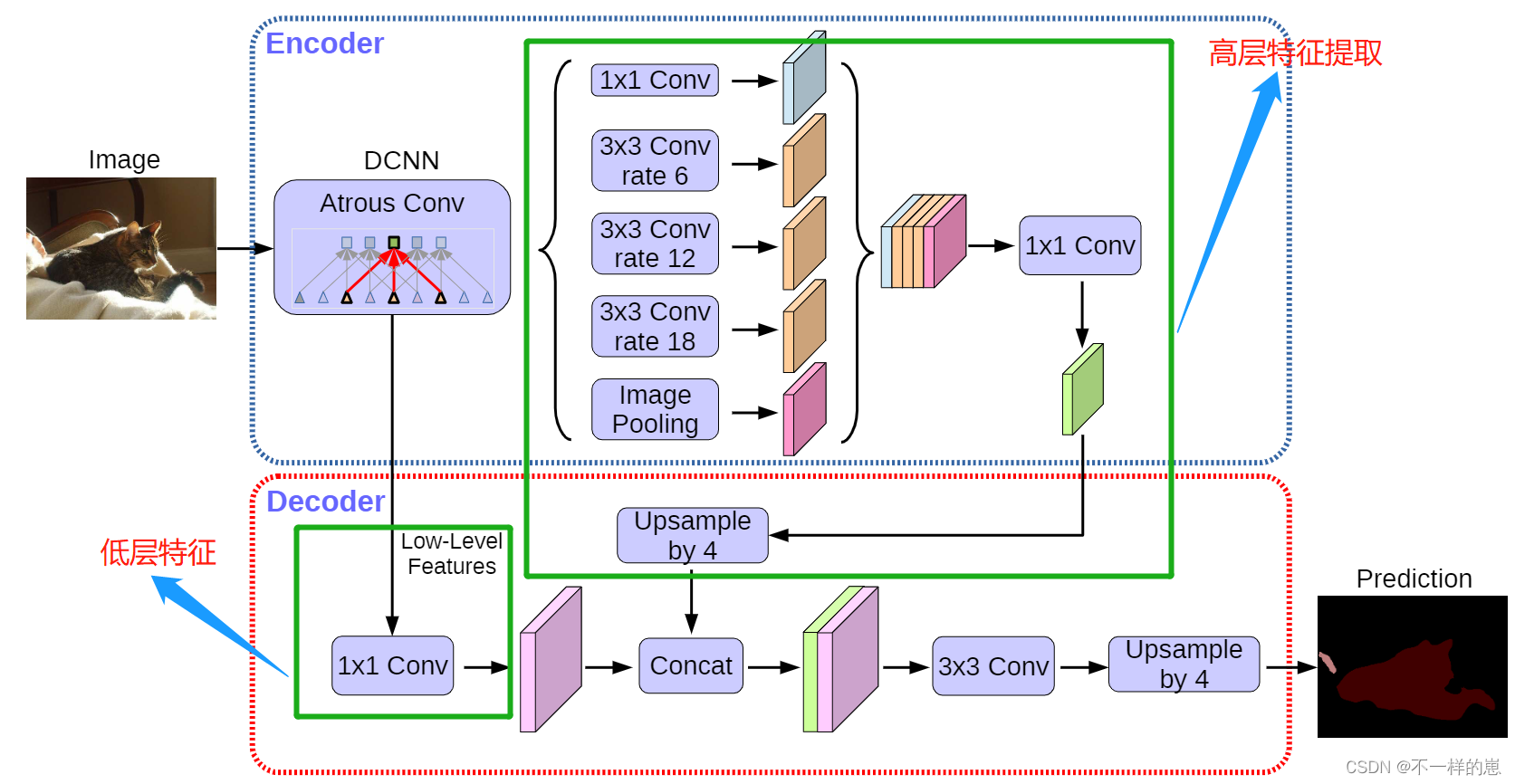

如上图, Encoder中DCNN部分代表语义分割中的主干网络, 在本文中为轻量网络MobileNetV2

特征提取分为高层语义提取和低层的语义提取两个部分。

首先 1 x 1 对通道上关联,起了一个全连接的作用,接下来是 3 个空洞卷积,有关空洞卷积参见。pooling ,然后经过 concate 将这些特征图进行组合,随后经过 1x1 卷积来改变通道大小。接下里对于底层特征图首先进行 1x1 卷积进行通道变换,这样可以拿到一些低层特征,在将上面组合变换通道数的特征图进行一次 4 倍上采样得到和低层特征图大小相同特征图后,进行组合后再进行一次 4 倍上采样。

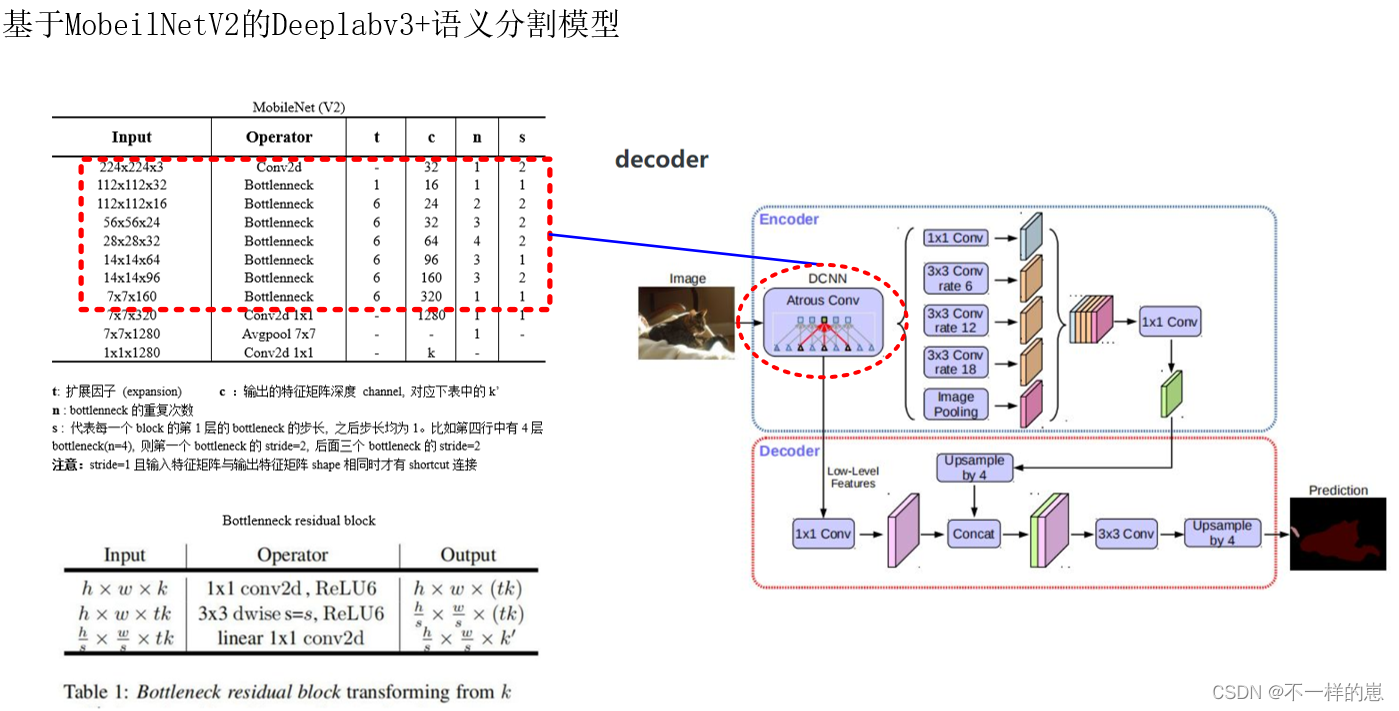

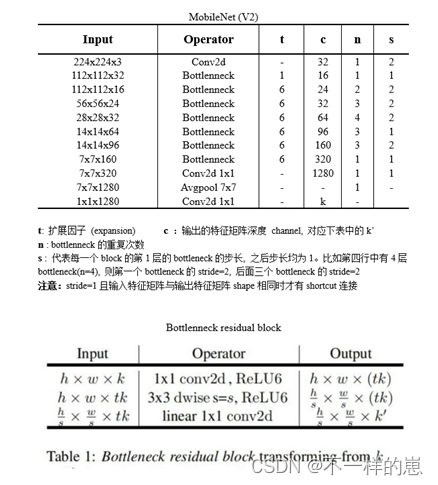

三. 模型整体框架

自2017年mobile net问世之后,研究人员就不断在追求更小,更快,更准的网络模型。在这个过程中,也发现了mobile net存在的问题:1.1.结构简单,mobile net使用类似VGG的结构,这种结构已经被证明不如resnet bottle neck结构;2.depthwise convolution的输出,在relu的作用下,很容易废掉。即输出为0,且无法恢复。针对上述问题,谷歌做了改进,也即是本文的主角,mobile net v2。mobile net v2的主要改进为引入了Inverted residual block和利用线性变换替换relu。

四. 模型检测效果

五. 代码实现

MobileV2 网络代码搭建

import mathimport osimport torchimport torch.nn as nnimport torch.utils.model_zoo as model_zooBatchNorm2d = nn.BatchNorm2d# PW、DW -> https://blog.csdn.net/qq_41895003/article/details/107408390# MobileNet V1、V2、V3 -> https://www.icode9.com/content-4-891085.htmldef conv_bn(inp, oup, stride): return nn.Sequential( nn.Conv2d(inp, oup, 3, stride, 1, bias=False), BatchNorm2d(oup), nn.ReLU6(inplace=True) )def conv_1x1_bn(inp, oup): return nn.Sequential( nn.Conv2d(inp, oup, 1, 1, 0, bias=False), BatchNorm2d(oup), nn.ReLU6(inplace=True) )# 深度可分离卷积(Depthwise Separable Convolution)# 一层深度卷积(Depthwise Convolution,DW)与一层逐点卷积(Pointwise Convolution,PW)组合# 倒残差结构Block PW升维 -> DW -> PW降维# 在 深度可分离卷积(DW + PW降维) 前加一层 PW# rate为卷积膨胀系数 若rate>1 则为膨胀卷积(空洞卷积)# nn.Conv2d(in_channels, out_channels, kernel_size, stride=1,padding=0, dilation=1, groups=1,bias=True):class InvertedResidual(nn.Module): def __init__(self, inp, oup, stride, expand_ratio): super(InvertedResidual, self).__init__() self.stride = stride assert stride in [1, 2] # assert in 断言, 若stride不在[1, 2]中则报错 hidden_dim = round(inp * expand_ratio) self.use_res_connect = self.stride == 1 and inp == oup # --------------------------------------------# # 深度可分离卷积 # 第一部分:DW, groups = 输出通道数 = 输入通道数, 当group = 1 时 即为普通卷积 # 第二部分:PW, 利用1×1的卷积更改输出通道数 # --------------------------------------------# if expand_ratio == 1: self.conv = nn.Sequential( #--------------------------------------------# # 进行3x3的逐层卷积,进行跨特征点的特征提取 #--------------------------------------------# nn.Conv2d(hidden_dim, hidden_dim, 3, stride, 1, groups=hidden_dim, bias=False), BatchNorm2d(hidden_dim), nn.ReLU6(inplace=True), #-----------------------------------# # 利用1x1卷积进行通道数的调整 #-----------------------------------# nn.Conv2d(hidden_dim, oup, 1, 1, 0, bias=False), BatchNorm2d(oup), ) else: self.conv = nn.Sequential( #-----------------------------------# # 利用1x1卷积进行通道数的上升 #-----------------------------------# nn.Conv2d(inp, hidden_dim, 1, 1, 0, bias=False), BatchNorm2d(hidden_dim), nn.ReLU6(inplace=True), #--------------------------------------------# # 进行3x3的逐层卷积,进行跨特征点的特征提取 #--------------------------------------------# nn.Conv2d(hidden_dim, hidden_dim, 3, stride, 1, groups=hidden_dim, bias=False), BatchNorm2d(hidden_dim), nn.ReLU6(inplace=True), #-----------------------------------# # 利用1x1卷积进行通道数的下降 #-----------------------------------# nn.Conv2d(hidden_dim, oup, 1, 1, 0, bias=False), BatchNorm2d(oup), ) def forward(self, x): if self.use_res_connect: return x + self.conv(x) else: return self.conv(x)class MobileNetV2(nn.Module): def __init__(self, n_class=1000, input_size=224, width_mult=1.): super(MobileNetV2, self).__init__() block = InvertedResidual input_channel = 32 last_channel = 1280 interverted_residual_setting = [ # t, c, n, s [1, 16, 1, 1], # 256, 256, 32 -> 256, 256, 16 [6, 24, 2, 2], # 256, 256, 16 -> 128, 128, 24 2 [6, 32, 3, 2], # 128, 128, 24 -> 64, 64, 32 4 [6, 64, 4, 2], # 64, 64, 32 -> 32, 32, 64 7 [6, 96, 3, 1], # 32, 32, 64 -> 32, 32, 96 [6, 160, 3, 2], # 32, 32, 96 -> 16, 16, 160 14 [6, 320, 1, 1], # 16, 16, 160 -> 16, 16, 320 ] assert input_size % 32 == 0 input_channel = int(input_channel * width_mult) self.last_channel = int(last_channel * width_mult) if width_mult > 1.0 else last_channel # 512, 512, 3 -> 256, 256, 32 # 对应 nets/nets.jpg中的MobilenetV2表中的第一个Conv2d self.features = [conv_bn(3, input_channel, 2)] for t, c, n, s in interverted_residual_setting: output_channel = int(c * width_mult) # 每一个blocks中包括 n个残差block, 第一个block的步长为s, 剩下的为1 for i in range(n): if i == 0: self.features.append(block(input_channel, output_channel, s, expand_ratio=t)) else: self.features.append(block(input_channel, output_channel, 1, expand_ratio=t)) input_channel = output_channel self.features.append(conv_1x1_bn(input_channel, self.last_channel)) self.features = nn.Sequential(*self.features) self.classifier = nn.Sequential( nn.Dropout(0.2), nn.Linear(self.last_channel, n_class), ) self._initialize_weights() def forward(self, x): x = self.features(x) x = x.mean(3).mean(2) x = self.classifier(x) return x # isinstance(x, y)判断x , y是否时相同类型 ,返回bool类型 # 例如:设置一个条件,如果m为Conv2d层就为该m添加相应的参数 def _initialize_weights(self): for m in self.modules(): if isinstance(m, nn.Conv2d): n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels m.weight.data.normal_(0, math.sqrt(2. / n)) if m.bias is not None: m.bias.data.zero_() elif isinstance(m, BatchNorm2d): m.weight.data.fill_(1) m.bias.data.zero_() elif isinstance(m, nn.Linear): n = m.weight.size(1) m.weight.data.normal_(0, 0.01) m.bias.data.zero_()def load_url(url, model_dir='./model_data', map_location=None): if not os.path.exists(model_dir): os.makedirs(model_dir) filename = url.split('/')[-1] cached_file = os.path.join(model_dir, filename) if os.path.exists(cached_file): return torch.load(cached_file, map_location=map_location) else: return model_zoo.load_url(url,model_dir=model_dir)def mobilenetv2(pretrained=False, **kwargs): model = MobileNetV2(n_class=1000, **kwargs) if pretrained: model.load_state_dict(load_url('https://github.com/bubbliiiing/deeplabv3-plus-pytorch/releases/download/v1.0/mobilenet_v2.pth.tar'), strict=False) return modelif __name__ == "__main__": model = mobilenetv2() for i, layer in enumerate(model.features): print(i, layer)DeepLabV3 + 网络代码搭建

import torchimport torch.nn as nnimport torch.nn.functional as Ffrom nets.xception import xceptionfrom nets.mobilenetV2 import mobilenetv2class MobileNetV2(nn.Module): def __init__(self, downsample_factor=8, pretrained=True): super(MobileNetV2, self).__init__() from functools import partial model = mobilenetv2(pretrained) # res = [0, 1, 2, 3, 4] # print(res[:-1]) # out:[0, 1, 2, 3] self.features = model.features[:-1] # [2, 4, 7, 14] 代表的是 self.features 中层的位置 self.total_idx = len(self.features) self.down_idx = [2, 4, 7, 14] if downsample_factor == 8: for i in range(self.down_idx[-2], self.down_idx[-1]): self.features[i].apply( partial(self._nostride_dilate, dilate=2) ) for i in range(self.down_idx[-1], self.total_idx): self.features[i].apply( partial(self._nostride_dilate, dilate=4) ) elif downsample_factor == 16: for i in range(self.down_idx[-1], self.total_idx): self.features[i].apply( partial(self._nostride_dilate, dilate=2) ) # dilate 膨胀系数 def _nostride_dilate(self, m, dilate): classname = m.__class__.__name__ if classname.find('Conv') != -1: if m.stride == (2, 2): m.stride = (1, 1) if m.kernel_size == (3, 3): m.dilation = (dilate // 2, dilate // 2) m.padding = (dilate // 2, dilate // 2) else: if m.kernel_size == (3, 3): m.dilation = (dilate, dilate) m.padding = (dilate, dilate) def forward(self, x): low_level_features = self.features[:4](x) x = self.features[4:](low_level_features) return low_level_features, x # -----------------------------------------## ASPP特征提取模块# 利用不同膨胀率的膨胀卷积进行特征提取# -----------------------------------------#class ASPP(nn.Module): def __init__(self, dim_in, dim_out, rate=1, bn_mom=0.1): super(ASPP, self).__init__() self.branch1 = nn.Sequential( nn.Conv2d(dim_in, dim_out, 1, 1, padding=0, dilation=rate, bias=True), nn.BatchNorm2d(dim_out, momentum=bn_mom), nn.ReLU(inplace=True), ) self.branch2 = nn.Sequential( nn.Conv2d(dim_in, dim_out, 3, 1, padding=6 * rate, dilation=6 * rate, bias=True), nn.BatchNorm2d(dim_out, momentum=bn_mom), nn.ReLU(inplace=True), ) self.branch3 = nn.Sequential( nn.Conv2d(dim_in, dim_out, 3, 1, padding=12 * rate, dilation=12 * rate, bias=True), nn.BatchNorm2d(dim_out, momentum=bn_mom), nn.ReLU(inplace=True), ) self.branch4 = nn.Sequential( nn.Conv2d(dim_in, dim_out, 3, 1, padding=18 * rate, dilation=18 * rate, bias=True), nn.BatchNorm2d(dim_out, momentum=bn_mom), nn.ReLU(inplace=True), ) self.branch5_conv = nn.Conv2d(dim_in, dim_out, 1, 1, 0, bias=True) self.branch5_bn = nn.BatchNorm2d(dim_out, momentum=bn_mom) self.branch5_relu = nn.ReLU(inplace=True) self.conv_cat = nn.Sequential( nn.Conv2d(dim_out * 5, dim_out, 1, 1, padding=0, bias=True), nn.BatchNorm2d(dim_out, momentum=bn_mom), nn.ReLU(inplace=True), ) def forward(self, x): [b, c, row, col] = x.size() # -----------------------------------------# # 一共五个分支 # -----------------------------------------# conv1x1 = self.branch1(x) conv3x3_1 = self.branch2(x) conv3x3_2 = self.branch3(x) conv3x3_3 = self.branch4(x) # -----------------------------------------# # 第五个分支,全局平均池化+卷积 # -----------------------------------------# global_feature = torch.mean(x, 2, True) global_feature = torch.mean(global_feature, 3, True) global_feature = self.branch5_conv(global_feature) global_feature = self.branch5_bn(global_feature) global_feature = self.branch5_relu(global_feature) global_feature = F.interpolate(global_feature, (row, col), None, 'bilinear', True) # -----------------------------------------# # 将五个分支的内容堆叠起来 # 然后1x1卷积整合特征 # -----------------------------------------# feature_cat = torch.cat([conv1x1, conv3x3_1, conv3x3_2, conv3x3_3, global_feature], dim=1) # 对应 nets.jpg中 encoder 右侧的 1x1 Covn # 利用1x1卷积调整通道数 # 52, 52, 1280 -> 52,52,256 result = self.conv_cat(feature_cat) return resultclass DeepLab(nn.Module): def __init__(self, num_classes, backbone="mobilenet", pretrained=False, downsample_factor=16): super(DeepLab, self).__init__() if backbone == "xception": # ----------------------------------# # 获得两个特征层 # 浅层特征 [128,128,256] # 主干部分 [30,30,2048] # ----------------------------------# self.backbone = xception(downsample_factor=downsample_factor, pretrained=pretrained) in_channels = 2048 low_level_channels = 256 elif backbone == "mobilenet": # ----------------------------------# # 获得两个特征层 # 浅层特征 [128,128,24] # 主干部分 [30,30,320] # ----------------------------------# self.backbone = MobileNetV2(downsample_factor=downsample_factor, pretrained=pretrained) in_channels = 320 low_level_channels = 24 else: raise ValueError('Unsupported backbone - `{}`, Use mobilenet, xception.'.format(backbone)) # -----------------------------------------# # ASPP特征提取模块 # 利用不同膨胀率的膨胀卷积进行特征提取 # -----------------------------------------# self.aspp = ASPP(dim_in=in_channels, dim_out=256, rate=16 // downsample_factor) # ----------------------------------# # 浅层特征边 # ----------------------------------# self.shortcut_conv = nn.Sequential( nn.Conv2d(low_level_channels, 48, 1), nn.BatchNorm2d(48), nn.ReLU(inplace=True) ) self.cat_conv = nn.Sequential( nn.Conv2d(48 + 256, 256, 3, stride=1, padding=1), nn.BatchNorm2d(256), nn.ReLU(inplace=True), nn.Dropout(0.5), nn.Conv2d(256, 256, 3, stride=1, padding=1), nn.BatchNorm2d(256), nn.ReLU(inplace=True), nn.Dropout(0.1), ) self.cls_conv = nn.Conv2d(256, num_classes, 1, stride=1) def forward(self, x): H, W = x.size(2), x.size(3) # -----------------------------------------# # 获得两个特征层 # low_level_features: 浅层特征-进行卷积处理 # x : 主干部分-利用ASPP结构进行加强特征提取 # -----------------------------------------# low_level_features, x = self.backbone(x) # mobilenetV2 返回的主干特征 进行aspp 对应nets.jpg中的 encoder # 注意返回的 主干特征是 进行到 5个层堆叠为止, 未进行后续操作 x = self.aspp(x) # mobilenetV2 返回的浅层特征 进行1x1的conv 对应nets.jpg中的 decoder中左侧的那个conv low_level_features = self.shortcut_conv(low_level_features) # -----------------------------------------# # 将加强特征边上采样 # 与浅层特征堆叠后利用卷积进行特征提取 # interpolate() 插值函数, 进行上/下采样处理 , 其中的 size 代表是输出后的 shape # -----------------------------------------# x = F.interpolate(x, size=(low_level_features.size(2), low_level_features.size(3)), mode='bilinear', align_corners=True) # 对应nets.jpg中的 decoder中的那个Concat # 48, 128, 128 + 256, 128, 128 -> 304, 128, 128 # 304, 128, 128 -> 256, 128, 128 x = self.cat_conv(torch.cat((x, low_level_features), dim=1)) # 256, 128, 128 -> 2, 128, 128 x = self.cls_conv(x) # 2, 128, 128 -> 2, 512, 512 # 将分类好的特征举证 resize成原图尺寸大小 的 特征 x = F.interpolate(x, size=(H, W), mode='bilinear', align_corners=True) return x六. 源码地址

GitHub - mcuwangzaiacm/MobileV2_DeepLabV3plus_pytorch1.2: 这是一个基于MobileV2主干的DeepLabV3plus语义分割模型基础代码,用于入门学习